You can publish 200 AI posts and still feel invisible in Google: impressions creep up, clicks stay flat, and your “content engine” turns into a cleanup project. That pattern is common with an autoblogging AI writer workflow because volume is easy to automate and SEO decisions are not. If your tool isn’t choosing keywords with a realistic ranking path, reacting to Google Search Console, and controlling cadence, you end up with overlapping URLs and pages that never earn stable queries.

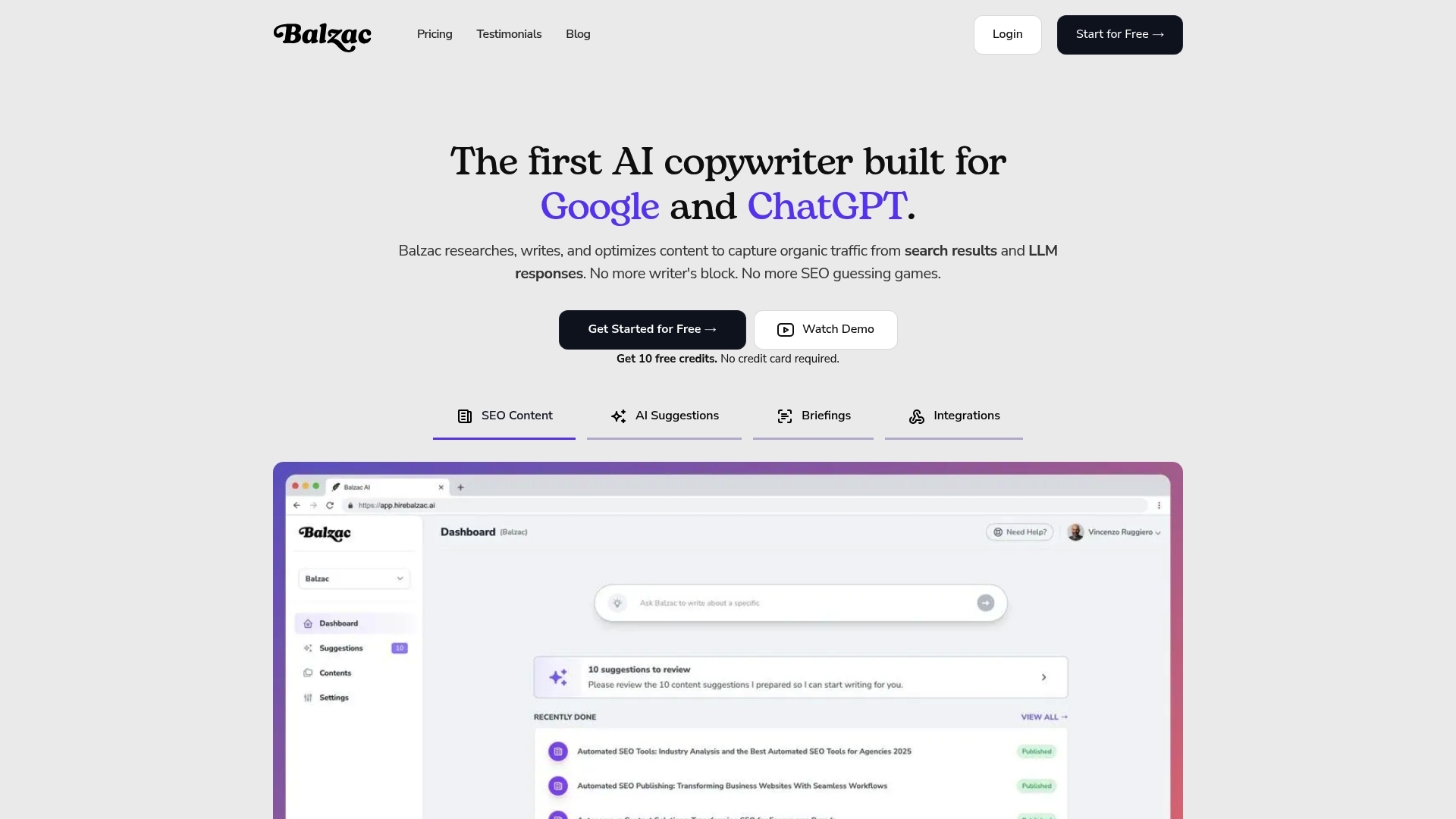

That is the real split in Autoblogging AI vs Balzac. Autoblogging.ai fits when you want fast output and you can live with hit-or-miss search performance. Balzac is built for people who already tried bulk publishing and want an autoblogging alternative that behaves like an SEO operator: it picks targets based on demand, learns from GSC outcomes, and publishes in a way that avoids cannibalization and index bloat.

| Feature | Autoblogging.ai (Typical Bulk Workflow) | Balzac (Autonomous SEO Agent) |
|---|---|---|
| Keyword Research | User-supplied topics and prompts, often broad | Keyword selection guided by SEO targets and competitor patterns |
| GSC Feedback Loop | Usually manual, outside the tool | Uses Google Search Console signals to steer what gets written next |
| Scheduling | Publish immediately or in bulk batches | Planned cadence to reduce cannibalization and quality dips |
| Internal Links | Often minimal or template-based | Structured internal linking aligned to a topic map |
| Editing Control | Draft output with optional tweaks | Brief-driven writing with tighter SERP intent matching |
| Publishing | CMS posting automation | Automatic publishing to major CMS platforms |
| Reporting | Content output metrics, limited SEO outcome tracking | Ranking and performance-oriented tracking |
| Pricing Approach | Typically volume-based (more posts, more cost) | Typically outcome and strategy-driven automation |

1. Keyword Targeting: Random Topics vs Search Demand

Keyword targeting is where most “publish 100 posts fast” workflows fall apart. In the Autoblogging AI vs Balzac debate, the difference starts before a single word gets generated: Autoblogging.ai-style bulk pipelines often pick topics because they are easy to produce, not because people search for them.

That creates a predictable failure pattern. You end up with pages that have no real query demand, no clear primary keyword, and no realistic path to page one. Google can still index them, but indexing is not the same as ranking.

How Balzac Chooses Keywords With Ranking Potential

Balzac treats keyword selection like an SEO operator would. It builds a plan around search demand and intent, then writes to that plan. The goal is simple: publish fewer posts that can win specific queries instead of flooding your site with “maybe someone will search this” articles.

In practice, intentional targeting looks like this:

- Starts from a topic map (clusters and supporting pages), so each post has a job: rank, support an internal link hub, or capture long-tail variations.

- Assigns one primary query per page, then uses close variants as secondary terms, so the page has a clear relevance signal.

- Filters out dead keywords (no impressions, no clicks, unclear intent), which prevents wasted content and index bloat.

- Accounts for site fit: a new site targets lower-difficulty long-tail queries, while an established site can push into more competitive head terms.

A bulk autoblogging AI writer usually does the opposite. It expands a seed topic into dozens of loosely related posts, repeats the same angle, and accidentally creates keyword cannibalization. Two to five pages fight for the same query, so none of them becomes the obvious best result.
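The one-primary-query-per-page rule can be checked before anything is published. The snippet below is a minimal sketch, not a description of how Balzac works internally: the `planned_pages` mapping, its URLs, and the function name are hypothetical, and the normalization (case and whitespace only) is a deliberately simple assumption.

```python
from collections import defaultdict


def find_duplicate_primary_queries(topic_map):
    """Return {normalized_query: [urls]} for primary queries assigned to 2+ planned URLs."""
    urls_by_query = defaultdict(list)
    for url, primary_query in topic_map.items():
        # Normalize case and whitespace so "CRM for Freelancers" and
        # "crm for freelancers" count as the same target.
        normalized = " ".join(primary_query.lower().split())
        urls_by_query[normalized].append(url)
    return {q: urls for q, urls in urls_by_query.items() if len(urls) > 1}


# Hypothetical content plan: URL -> primary query.
planned_pages = {
    "/crm-for-freelancers": "crm for freelancers",
    "/best-crm-freelancers": "CRM for Freelancers",
    "/crm-pricing-guide": "crm pricing",
}

duplicates = find_duplicate_primary_queries(planned_pages)
print(duplicates)
```

Any query that appears in the result is a planned cannibalization: pick one URL to own it and retarget the other before writing either page.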

If you are looking for an autoblogging alternative because your traffic flatlined after publishing at scale, start by auditing your keyword list. Pull queries and impressions in Google Search Console, then ask one question per URL: “What exact search term should this page win?” If you cannot answer in one sentence, the topic was probably random, not demand-driven.

2. SEO Feedback Loop: GSC-Driven Decisions vs Set-and-Forget

That “one question per URL” test is the start of an SEO feedback loop. The difference in Autoblogging AI vs Balzac shows up right after publishing: do you look at Google Search Console signals and change the plan, or do you keep shipping the same templates and hope volume wins?

A real feedback loop uses Google Search Console (GSC) metrics as inputs for the next batch of pages. GSC gives you the only first-party view of what Google actually surfaced your pages for: queries, impressions, clicks, and average position. When those numbers move, your content strategy should move with them.

What A GSC-Driven Loop Actually Does

Balzac’s approach treats each published URL like an experiment with measurable outcomes. Instead of a static autoblogging pipeline, you iterate based on what GSC reports for that exact page and cluster.

- Pull page-level queries. In GSC Performance, filter by Page, then review the Queries tab. Look for “accidental keywords” where you get impressions but the page was not written to win that term.

- Decide if the page has a ranking path. Impressions with average position 8 to 20 usually signal a near-win. Update the page to match the query’s intent and add missing sections that top results include.

- Fix CTR before writing more. If you sit at positions 1 to 5 with low clicks, rewrite the title tag and meta description to match the query language. Google does not promise to show your meta description, but stronger titles still lift clicks.

- Prevent cannibalization. If two URLs show the same primary query in GSC, merge them or retarget one page. Cannibalization is common after using an autoblogging AI writer that publishes many similar posts.

- Feed winners into the topic map. When a page earns impressions for a subtopic, create supporting articles and internal links around it. This is how you turn one ranking into a cluster, instead of a one-off post.

Set-and-forget autoblogging usually stops at “publish and move on.” That means you miss obvious signals: pages stuck at positions 30 to 60 often need a different keyword, not more words. Pages getting impressions for the wrong query need a rewrite, not another 100 posts.
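The position bands above can be turned into a rough triage pass over exported page data. This is a sketch under stated assumptions: the row values are invented, the 2% `low_ctr` cutoff is an illustrative guess rather than a Google-defined threshold, and pages that fall between the bands are simply marked for monitoring.

```python
def triage_page(clicks, impressions, avg_position, low_ctr=0.02):
    """Suggest a next action for one page from its GSC metrics."""
    if impressions == 0:
        return "no data yet"
    ctr = clicks / impressions
    if avg_position <= 5:
        # Already ranking well: act only if few searchers click through.
        if ctr < low_ctr:
            return "rewrite title and meta description"
        return "leave alone"
    if 8 <= avg_position <= 20:
        # Near-win band from the text above.
        return "update page to match query intent"
    if 30 <= avg_position <= 60:
        # Stuck band: more words rarely help, a different target might.
        return "retarget to a different keyword"
    return "monitor"


# Hypothetical rows: (page, clicks, impressions, average position).
rows = [
    ("/crm-guide", 5, 1000, 3.2),
    ("/crm-pricing", 12, 400, 14.0),
    ("/crm-history", 0, 150, 42.5),
    ("/crm-setup", 40, 500, 2.1),
]

actions = {page: triage_page(c, i, p) for page, c, i, p in rows}
for page, action in actions.items():
    print(page, "->", action)
```

The output is a to-do list per URL, which is the point of the loop: the next batch of work comes from the data, not from the publishing queue.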

If you want an autoblogging alternative that behaves like an SEO operator, connect publishing to GSC outcomes. Google documents these reports and metrics in its own Search Console help center, which is the reference point for queries, impressions, clicks, and position definitions: Google Search Console Performance report.

3. Content Briefing: SERP Intent Matching vs Generic AI Drafts

Google Search Console can tell you which queries a page shows for, but your content brief decides whether the page deserves clicks. This is where an autoblogging alternative like Balzac separates itself from an Autoblogging.ai-style bulk pipeline. Bulk generation tends to start with a prompt and hope the SERP fits. Balzac starts with the SERP and writes a page that matches what already ranks.

SERP intent matching means your page answers the same job the top results answer. If the query is “best X,” Google often rewards list formats with comparison sections. If the query is “how to,” Google often rewards step-by-step instructions with clear prerequisites. Generic AI drafts often miss this and produce a middle-of-the-road explainer that satisfies nobody.

What A SERP-Based Brief Includes (And Generic Drafts Usually Skip)

In the Autoblogging AI vs Balzac comparison, the biggest on-page difference is briefing depth. A useful brief pulls repeatable patterns from the first page of Google and turns them into requirements.

- Intent type and format: listicle, tutorial, definition, template, calculator, or product page. You can validate this by scanning the top 10 results in Google.

- Primary angle: “budget,” “for beginners,” “2026,” “for small businesses,” or “for WordPress.” This angle often appears in titles and H1s across winners.

- Heading blueprint: the sections users expect, such as “pricing,” “pros and cons,” “setup steps,” “alternatives,” or “troubleshooting.”

- Differentiation requirement: a unique example, dataset, screenshot, or workflow. Without this, an autoblogging AI writer produces content that looks interchangeable across sites.

- Internal link targets: which hub page to support and which supporting pages to reference, so the post has a role in your topic map.

Competitor patterns matter because Google already tested them at scale. You do not copy competitors; you copy the structure that satisfies the query.

When you publish generic drafts in bulk, you usually get the same failure mode: the page ranks briefly for low-value long tails, then stagnates because it never matches intent tightly enough to earn clicks. Balzac’s brief-driven approach aims to prevent that by designing the page around the SERP before generation.
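One way to enforce that kind of brief is to model it as a small record and refuse to generate until every field is filled. The sketch below is an assumption about how such a gate could look, not Balzac's actual brief format; the `ContentBrief` fields mirror the checklist above and the example values are made up.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ContentBrief:
    """Hypothetical brief record; one field per item in the checklist above."""
    primary_query: str = ""
    intent_format: str = ""      # e.g. "listicle", "tutorial", "definition"
    primary_angle: str = ""      # e.g. "for beginners", "for WordPress"
    headings: list = field(default_factory=list)
    differentiator: str = ""     # unique example, dataset, screenshot, or workflow
    hub_url: str = ""            # internal link target in the topic map


def missing_fields(brief):
    """Return the names of brief fields that are still empty."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name)]


brief = ContentBrief(
    primary_query="crm for freelancers",
    intent_format="listicle",
    primary_angle="budget",
    headings=["Pricing", "Pros and cons", "Alternatives"],
    hub_url="/crm-guide",
)

gaps = missing_fields(brief)
print("ready to generate" if not gaps else f"brief incomplete: {gaps}")
```

Here the brief is blocked because no differentiator was supplied, which is exactly the field generic drafts tend to skip.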

4. Publishing Strategy: Smart Cadence vs Content Flooding

Publishing cadence determines whether Google sees your site as a focused resource or a noisy archive. That is why an autoblogging alternative often wins or loses on scheduling, not writing quality. When an autoblogging AI writer pushes 100 posts fast, you usually ship overlapping topics before you know which pages deserve attention, links, or updates.

Google can crawl a flood of URLs, but your site still pays the cost: more low-performing pages to maintain, more similar pages competing for the same queries, and more internal links spread thin. You also lose the chance to use early ranking signals to decide what to publish next.

What Smart Cadence Looks Like in Autoblogging AI vs Balzac

In Autoblogging AI vs Balzac, the practical difference is timing. Bulk pipelines publish based on throughput. Balzac publishes based on what the site can support, what the topic map needs next, and what Google Search Console signals suggest you should double down on.

A smart cadence is not “slow.” It is controlled. Use a schedule that leaves room for indexing, early impressions, and quick edits.

- Start with one cluster at a time. Publish a pillar page, then 2 to 6 supporting articles over the next days or weeks. Link them together immediately so Google understands the cluster.

- Wait for signals before scaling. Give each batch time to earn impressions in Google Search Console. If pages sit at position 40+, fix intent or targeting before publishing more similar URLs.

- Cap daily publishes on small sites. New or low-authority domains often do better with a steady trickle than a spike. A spike can create a backlog of thin pages that never get revisited.

- Schedule updates as first-class work. Plan rewrites for pages that get impressions but low CTR. Plan merges when two URLs show the same main query in GSC.

- Align internal links with release order. Publish supporting pages after the hub exists, so every new URL can point to a stable target.

Content flooding feels productive because word count climbs. Smart cadence feels slower because it forces decisions: which pages deserve a rewrite, which should merge, and which keywords you should stop chasing. That is the scheduling gap between a typical autoblogging AI writer workflow and a strategy-driven agent like Balzac.
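A controlled cadence for a single cluster can be expressed as a simple schedule: the hub goes live first, and supporting pages follow at a fixed gap. This is only a sketch; the slugs, the start date, and the three-day `gap_days` default are illustrative assumptions, and the right gap depends on how quickly your site gets indexed.

```python
from datetime import date, timedelta


def schedule_cluster(pillar, supporting, start, gap_days=3):
    """Return [(publish_date, slug)] with the pillar first and supporting pages spaced out."""
    plan = [(start, pillar)]
    for i, slug in enumerate(supporting, start=1):
        # Each supporting page goes live after the hub, so it always has
        # a stable internal link target.
        plan.append((start + timedelta(days=i * gap_days), slug))
    return plan


# Hypothetical cluster.
plan = schedule_cluster(
    pillar="/email-marketing-guide",
    supporting=["/email-subject-lines", "/email-list-cleaning", "/welcome-sequence"],
    start=date(2026, 1, 5),
)

for publish_date, slug in plan:
    print(publish_date.isoformat(), slug)
```

Widening `gap_days` leaves more room to read early impressions in GSC before the next page ships.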

5. Ranking Outcomes: Why Bulk AI Content Often Flatlines

Bulk publishing creates the illusion of progress, then rankings stall. If you searched for an autoblogging alternative after using an autoblogging AI writer, you probably hit the same wall: lots of URLs, little sustained movement in Google.

“Flatline” usually comes from four mechanics that bulk pipelines trigger at scale. None of them require a penalty. They are self-inflicted relevance problems.

Where Bulk Autoblogging Breaks Ranking Momentum

- Index bloat: You publish hundreds of near-zero value pages. Google spends crawl budget and indexing attention on URLs that never earn impressions or links. In Google Search Console, you often see many pages in “Crawled - currently not indexed” or “Discovered - currently not indexed.” Those statuses are common when sites ship thin pages faster than Google wants to evaluate them.

- Thin differentiation: Many Autoblogging.ai-style outputs share the same outline, same definitions, and same generic examples. Google sees interchangeable pages across the web and has no reason to rank yours. You can spot this by comparing your top 20 posts. If the first 200 words of each read like they came from the same template, the rest of the article probably does too.

- Keyword cannibalization: Bulk workflows expand one seed topic into dozens of overlapping posts. Two to five URLs target the same query intent, so Google rotates them, then settles on none. In GSC, cannibalization looks like multiple pages getting impressions for the same query, with unstable average position and low clicks.

- Weak internal linking: Template links (home, category, latest posts) do not build topical authority. Without hub pages and deliberate anchors, your best articles sit isolated. That makes it harder for Google to understand which page is the primary answer for a cluster.

Balzac avoids these failure modes by treating publishing as a controlled system: fewer URLs, clearer primary queries, a topic map that dictates internal links, and a feedback loop that merges or rewrites pages when GSC shows overlap.

If you want to diagnose your own flatline fast, start inside GSC: find queries that trigger multiple URLs, then merge, redirect, or retarget until one page owns each intent. Google’s documentation on indexing statuses is the reference point for what those labels mean: Google Search Console Indexing report.
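Finding queries that trigger multiple URLs is easy to script once you have rows of query, page, and impressions (for example, from a Search Console API request that uses both the query and page dimensions). The sketch below assumes that row shape; the sample data and the `min_impressions` noise floor of 10 are invented for illustration.

```python
from collections import defaultdict


def find_cannibalized_queries(rows, min_impressions=10):
    """Return {query: [pages, strongest first]} for queries where 2+ pages earn impressions."""
    totals = defaultdict(lambda: defaultdict(int))
    for query, page, impressions in rows:
        totals[query][page] += impressions

    result = {}
    for query, pages in totals.items():
        # Ignore pages below the noise floor so one stray impression
        # does not flag a query as cannibalized.
        competing = {p: n for p, n in pages.items() if n >= min_impressions}
        if len(competing) > 1:
            result[query] = sorted(competing, key=competing.get, reverse=True)
    return result


# Hypothetical rows: (query, page, impressions).
rows = [
    ("best crm", "/best-crm", 900),
    ("best crm", "/top-crm-tools", 300),
    ("best crm", "/crm-glossary", 4),
    ("crm pricing", "/crm-pricing", 500),
]

overlaps = find_cannibalized_queries(rows)
print(overlaps)
```

The first page in each list is the natural candidate to keep; the others are candidates to merge, redirect, or retarget.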

6. What Is the Best Autoblogging Alternative for Real SEO Growth?

The best autoblogging alternative for real SEO growth is the approach that picks keywords with a ranking path, measures outcomes in Google Search Console, and publishes at a cadence your site can support. If your current autoblogging AI writer workflow creates dozens of URLs per week, you usually trade short-term output for long-term cleanup: cannibalization, index bloat, and pages that never earn stable queries.

Use your site situation to choose the right path.

Best Autoblogging Alternative by Site Scenario

- Brand-new site (0 to low traffic): Avoid bulk posting. Start with one tight topic cluster, publish a pillar page plus a handful of supporting pages, then wait for impressions. A new domain needs clear topical focus and internal links more than volume. Balzac fits this scenario because it plans around a topic map and can keep output controlled while you learn what Google surfaces your pages for in GSC.

- Existing site with some traffic (already has GSC data): Let GSC pick your next content. Find pages sitting around average position 8 to 20 for valuable queries, then update them and publish supporting articles that strengthen the cluster. This is where Autoblogging AI vs Balzac becomes practical: a bulk pipeline keeps generating new URLs, while a strategic agent uses queries, impressions, clicks, and position to decide what to write and what to refresh.

- Niche authority site (clear expertise, monetization, and links): Prioritize depth and differentiation. Publish fewer pages, but make each page the best result for a specific intent. Add original examples, comparisons, and internal links that push authority to money pages. Bulk autoblogging often repeats the same angle across many posts, which makes it harder for any single URL to become the obvious winner.

If you want measurable growth, pick the workflow that forces one primary query per URL, then proves progress in GSC over 2 to 6 weeks. When a page earns impressions but low clicks, rewrite the title and expand the sections that match top-ranking pages. When two URLs trigger the same query, merge or retarget before you publish more.

7. How Do You Switch From Autoblogging.ai to Balzac Without Losing Traffic?

If your site has 50 to 200 Autoblogging.ai posts live, switching tools feels risky. The risk is real, but it is manageable if you treat the change like a controlled SEO cleanup. This checklist keeps traffic stable while you move to an autoblogging alternative that publishes based on keywords, intent, and Google Search Console outcomes.

Migration Checklist From Autoblogging.ai to Balzac

- Export a full URL inventory. Pull all indexable URLs from your CMS sitemap, then crawl the site with Screaming Frog SEO Spider (a desktop site crawler) to capture status codes, canonical tags, word count, titles, and internal links.

- Pull performance per URL from Google Search Console. In GSC Performance, export Pages with clicks, impressions, average position, and top queries. This becomes your keep, improve, merge, remove decision sheet.

- Classify every page into four buckets:

  - Keep: earns clicks, ranks top 20 for a clear query, or has strong links.

  - Improve: gets impressions (often positions 8 to 30) but misses intent or has weak CTR.

  - Merge: overlaps with another URL on the same primary query (cannibalization).

  - Remove: near-zero impressions over time, no unique angle, no links, no strategic fit.

- Prune safely with redirects, not deletions. When you remove or merge, 301 redirect the old URL to the best matching page. Use WordPress Redirection (a popular redirect manager plugin) or your host/CDN rules. Keep the redirect map in a spreadsheet.

- Build a topic map before you publish again. Define cluster hubs and supporting pages, then assign one primary query per URL. This step prevents the same overlap that bulk autoblogging creates.

- Fix internal links in the same pass. Add contextual links from supporting pages to the hub page, and between closely related supporting pages. Update anchors so they match the target query, not generic “click here.”

- Restart publishing with a controlled cadence. Publish cluster-by-cluster. Wait for indexing and early impressions, then adjust briefs based on what GSC shows for queries and positions.

- Measure the migration, not output volume. Track in GSC: total clicks, total impressions, and the count of pages with impressions. Watch the Indexing report for spikes in “Crawled - currently not indexed.” Reference: Google Search Console Indexing report.

If you follow the sheet, redirects, and topic map in that order, you stop the bleed from index bloat and cannibalization first. Then Balzac can publish into a structure Google can understand.
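The keep, improve, merge, remove decision sheet from the checklist can be drafted automatically and then reviewed by hand. The sketch below is one possible reading of those buckets, not a fixed rule: the order of the checks, the `MIN_IMPRESSIONS` cutoff, and the `PageStats` fields (including the manually filled `stronger_duplicate`) are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional

MIN_IMPRESSIONS = 10  # assumed cutoff for "near-zero impressions"


@dataclass
class PageStats:
    """Hypothetical per-URL row built from the crawl and the GSC export."""
    url: str
    clicks: int
    impressions: int
    avg_position: float
    has_strong_links: bool = False
    stronger_duplicate: Optional[str] = None  # better URL for the same primary query


def classify(page):
    """Assign one of the four migration buckets to a page."""
    if page.stronger_duplicate:
        return "merge"
    if page.impressions < MIN_IMPRESSIONS and not page.has_strong_links:
        return "remove"
    if page.has_strong_links or (page.clicks > 0 and page.avg_position <= 20):
        return "keep"
    # Has impressions but no clicks or a weak position: worth a rewrite.
    return "improve"


pages = [
    PageStats("/best-crm", 120, 3000, 6.4),
    PageStats("/crm-tips", 0, 400, 18.0),
    PageStats("/top-crm-tools", 3, 250, 15.0, stronger_duplicate="/best-crm"),
    PageStats("/crm-facts", 0, 2, 70.0),
]

buckets = {p.url: classify(p) for p in pages}
for url, bucket in buckets.items():
    print(url, "->", bucket)
```

Treat the output as a first draft of the sheet. Pages marked "remove" or "merge" still need a redirect target chosen by a person before anything is taken offline.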

FAQ: Is Autoblogging AI Worth It for New Sites in 2026?

If you are choosing between an autoblogging alternative and a bulk publisher for a new domain, start with one reality: Google can index a lot of pages, but it rarely rewards a brand-new site for publishing hundreds of near-identical articles. New sites win by proving topical focus, clean internal links, and pages that match the query intent from day one.

Quick Answers New Site Owners Ask in 2026

- Will Autoblogging.ai content get indexed? Sometimes. In Google Search Console, new sites that publish at scale often stack URLs in Discovered - currently not indexed or Crawled - currently not indexed. Those statuses mean Google found the URL, but did not commit to indexing it. Reference: Google Search Console Indexing report.

- What quality threshold does Google expect? Google does not publish a word-count rule. It wants pages that satisfy the query better than alternatives. A practical threshold is: one primary query per URL, a SERP-matching structure (list, tutorial, definition), and information that is specific to your site or audience. Generic definitions and recycled sections usually underperform.

- How many posts should a new site publish? Start smaller than you think. Most new sites do better with one tight cluster (1 pillar page plus 4 to 8 supporting pages) than 50 loosely related posts. That cluster gives you a clear internal linking graph and a clean way to measure what earns impressions.

- How long does it take to rank? Expect weeks, not days. Use Google Search Console to watch impressions and average position first, then clicks. If you see impressions and positions improving, you have a path. If you see zero impressions across a cluster, your targeting or intent match is off.

- When does automation help vs hurt? Automation helps when it follows a topic map, avoids cannibalization, and schedules time for rewrites based on GSC queries. Automation hurts when it floods your site with overlapping URLs, weak internal links, and template content that reads the same across posts.

If you still want an autoblogging AI writer for a new site, use it like a production engine after you set constraints. Balzac fits that “constrained automation” approach because it treats keyword selection, internal links, and publishing cadence as part of the system, instead of treating content volume as the goal.

Conclusion: Quality-Over-Quantity Wins for Autonomous SEO

Constrained automation is the decision point in Autoblogging AI vs Balzac. If you treat publishing volume as the goal, you will keep paying the same tax: overlapping URLs, weak intent match, and a Google Search Console graph that moves sideways.

Use this decision rule and stick to it: if you cannot name the primary query, the ranking path, and the internal link role for a page before it goes live, do not publish it. That is the difference between an autoblogging AI writer workflow and an autoblogging alternative built for SEO outcomes.

A Simple Rule for Choosing Volume or Strategy

Autoblogging.ai-style bulk generation makes sense when content output matters more than search performance. Examples include low-stakes topical coverage, internal documentation, or short-lived campaigns where rankings are optional.

Balzac fits when you want autonomous SEO that behaves like an operator: it targets keywords with demand, uses Google Search Console signals to choose what to publish next, and keeps cadence controlled so one page can actually win an intent.

If you want the next step that creates results fast, do this today:

- Open Google Search Console and export your top 50 pages by impressions.

- Pick 10 pages sitting around positions 8 to 30 with clear commercial or lead value.

- Fix cannibalization first: if two URLs share the same main query, merge or retarget one.

- Rewrite for the query you already earned: add the missing sections you see across the current top results, then tighten the title to match query language.

- Publish one supporting cluster around the best near-win page, then link every new article back to that hub.

Run that loop for 2 to 6 weeks and watch what changes: the count of pages earning impressions, average position for your near-wins, and clicks that come from pages you improved instead of pages you flooded into existence.

If you want automation that compounds, pick the system that forces hard choices before publishing. Google rewards clarity. Your content engine should too.