Your team can ship ten SEO drafts overnight. Then they sit in “Needs review” for two weeks because someone has to verify claims about pricing, security, or what the product actually does.

That bottleneck is the real story in AI SEO vs traditional SEO for SaaS teams. AI SEO can automate topic discovery, briefs, drafting, on-page cleanup, internal link suggestions, and even pushing pages into WordPress or Webflow. Traditional SEO can reach the same end state, but it usually gets there through spreadsheets, handoffs, and expensive production time.

This matters because SaaS content programs rarely fail on strategy alone. They fail on throughput: keyword research piles up, writing cycles drag, on-page hygiene slips, and refresh work never makes it onto the calendar. Automation changes the cost and speed of production, but it also raises the stakes on governance. When output is easy, QA rules decide whether you build trust or publish liabilities.

Below, you’ll see where AI automation actually changes workflows and unit economics, where humans still have to own judgment, and how to choose AI SEO, traditional SEO, or a hybrid based on the pages you publish and the risk your business can tolerate.

How Does AI SEO Content Automation Work End to End?

In AI SEO, automation changes the work by turning a messy, people-dependent content pipeline into a repeatable system with clear approval gates. The bottleneck usually moves to human review, because the machine can keep producing drafts, but your team still owns accuracy, positioning, and risk.

End-to-end AI SEO content automation typically runs as a loop, not a one-time project:

- Topic discovery and prioritization: The system pulls keyword ideas from sources like Google Search Console, competitor SERPs, and tools such as Ahrefs (SEO backlink and keyword research) or Semrush (SEO suite). It clusters queries by intent, then scores them by difficulty, traffic potential, and product fit. Human handoff: approve the topic list and decide what not to publish (brand, legal, strategy).

- Keyword mapping and content brief generation: Automation assigns a primary query, secondary variations, and a page type (comparison page, integration page, how-to). It drafts an outline, headings, and recommended internal links. Human handoff: sanity-check intent, confirm the angle, and add product specifics.

- Drafting with constraints: The model writes a first draft using house style rules, required entities, and banned claims. Strong systems also generate metadata (title tag, meta description) and schema suggestions. Human handoff: SME review for technical accuracy, compliance, and “does this match how we actually sell?”

- On-page optimization and internal linking: Automation checks headings, term coverage, image alt text, and link targets. It proposes anchors and updates older pages to link forward. Human handoff: approve high-impact link changes so you do not break positioning on revenue pages.

- CMS publishing and formatting: The system converts the draft into CMS-ready HTML, adds images, and schedules publishing in WordPress, Webflow, or Contentful. Human handoff: final editorial pass, brand voice, and layout QA.

- Monitoring and refresh cycles: Automation watches indexation, rankings, clicks, and decay, often via Google Search Console and Google Analytics 4. It queues refreshes when impressions rise but CTR drops, or when rankings slide. Human handoff: approve major rewrites, especially when product messaging changed.
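
The loop above can be sketched as a pipeline that runs automated stages in order and stops at the first human handoff that has not been signed off. This is a minimal illustration, not any vendor's API: the stage names, approver roles, and the `advance` helper are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Stage:
    name: str
    approver: str  # role that owns the human handoff for this stage


# Stage names and approver roles are illustrative assumptions.
PIPELINE = [
    Stage("topic_discovery", "seo_lead"),
    Stage("brief", "seo_lead"),
    Stage("draft", "sme"),
    Stage("on_page_and_links", "editor"),
    Stage("cms_publish", "editor"),
    Stage("monitor_and_refresh", "product_marketing"),
]


@dataclass
class ContentItem:
    topic: str
    approvals: set = field(default_factory=set)   # stage names a human has signed off
    completed: list = field(default_factory=list)  # stages that cleared their gate


def advance(item):
    """Move an item forward until it reaches a stage awaiting approval.

    Returns the name of the blocking stage, or None once every gate is cleared.
    """
    for stage in PIPELINE:
        if stage.name in item.completed:
            continue
        # The automated work for the stage would run here (omitted in this sketch).
        if stage.name not in item.approvals:
            return stage.name
        item.completed.append(stage.name)
    return None


item = ContentItem("how to rotate API keys")
print(advance(item))  # blocked at the first gate
item.approvals.update({"topic_discovery", "brief"})
print(advance(item))  # now blocked at SME review of the draft
```

The point of the design is that the queue position is always explicit: at any moment you can ask which gate an item is waiting on and who owns it.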

Where SaaS Teams Keep Humans in the Loop

The safest pattern for AI SEO vs traditional SEO in SaaS is simple: automate everything that looks like production work, and keep humans on decisions that create risk. That usually means approvals for topic selection, claims about security or compliance (SOC 2, HIPAA), pricing, and any page that sales uses in late-stage deals.

Side-by-Side Workflow: AI SEO vs Traditional SEO (Comparison Table)

Approvals for sensitive claims (SOC 2, HIPAA, pricing) create the main human “gates” in both models. The difference between AI SEO and traditional SEO is what happens between gates: who does the production work, how repeatable it is, and how fast a team can move from idea to indexed page.

| Workflow Stage | AI SEO (Automated Content Ops) | Traditional SEO (Manual Content Ops) |
|---|---|---|
| Topic Discovery | Agent pulls competitors and SERPs, clusters keywords, flags gaps using tools like Semrush or Ahrefs. | Strategist exports keywords, filters in spreadsheets, reviews SERPs manually, then prioritizes in a backlog. |
| Keyword Mapping | System assigns primary and secondary terms to URLs, checks cannibalization against the sitemap. | Team maps keywords to pages in a sheet, often misses overlap until rankings flatten. |
| Briefs | Auto-creates briefs from SERP patterns, headings, People Also Ask, and intent notes, human approves. | SEO lead writes briefs in Google Docs, then revises after writer questions and editor feedback. |
| Outlines | Generates section structure, FAQs, and examples, editor adjusts for product positioning and POV. | Writer or strategist creates outline from scratch, approval cycles vary by stakeholder availability. |
| Drafting | Drafts in minutes, then humans add product specifics, screenshots, and verified claims. | Writer drafts over days, SMEs review late, rewrites increase cycle time. |
| On-Page Optimization | Writes titles, meta descriptions, H1-H3 structure, schema suggestions, and checks keyword coverage. | SEO specialist audits after the draft, fixes headings, metadata, and schema by hand. |
| Internal Linking | Suggests anchors and targets based on topic clusters and existing pages, can insert links automatically. | Editor adds a few links from memory, or runs a separate linking audit later. |
| Publishing | Formats to CMS blocks and can publish to WordPress, Webflow, or Contentful after approval (some agents, like Balzac, do this end to end). | Marketing ops copies from Google Docs, fixes formatting, adds images, then schedules manually. |
| Refresh Cycles | Monitors ranking drops and content decay signals, proposes updates and republishes on a cadence. | Refreshes happen when someone notices a traffic drop in Google Search Console or GA4. |
| Reporting | Auto-tracks indexation, impressions, clicks, and rankings, then annotates changes by publish date. | Analyst pulls reports from Google Search Console and Looker Studio, then writes a summary. |

The table hides an important operational detail: AI SEO reduces handoffs. Traditional SEO adds coordination work each time a task moves from strategist to writer to editor to CMS publisher. Automation collapses those steps into one pipeline, then concentrates human time where mistakes create real risk.

Speed vs Consistency: What Actually Limits Output Even With AI?

AI SEO makes drafting and on-page work fast, but it does not automatically give SaaS teams a reliable publishing cadence. In AI SEO vs traditional SEO, output usually hits a ceiling at the same place: human approvals. The machine can produce ten drafts overnight. Your team still has to decide which ones are safe, accurate, and on-message.

The real constraint is consistency. Speed without repeatable review rules creates content debt: pages that need rewrites, sales enablement that stops trusting the blog, and legal escalations that slow everything down.

Where AI SEO Pipelines Still Bottleneck

- SME review: Product, engineering, and security teams do not have time to read 2,000-word drafts. They will block on anything that touches architecture, performance claims, “how it works,” or competitor comparisons.

- Compliance and legal: SOC 2, HIPAA, GDPR, and financial claims create hard approval gates. If your AI SEO system writes “we are HIPAA compliant” without a source, you now have a risk incident, not a content problem.

- Brand voice and positioning: AI can follow style rules, but it cannot resolve internal disagreements about messaging. When product marketing changes the narrative, every queued draft becomes stale.

- Design and CMS QA: Publishing automation helps, but teams still catch broken tables, incorrect CTAs, and formatting issues in WordPress, Webflow, or Contentful.

- Distribution and measurement: If nobody owns Google Search Console triage, internal linking updates, and refresh decisions, pages ship and then decay.

SaaS teams remove these bottlenecks by treating AI SEO like manufacturing: define gates, define owners, then let the system run.

- Create a two-tier content risk policy: auto-publish low-risk how-to content, require review for security, pricing, compliance, and “best X software” comparisons.

- Use SME time for deltas, not full reads: require the AI draft to highlight claims, numbers, and product assertions in a short checklist for approval.

- Lock a style and claims rulebook: keep an approved messaging doc, a list of forbidden promises, and a source requirement for any stat.

- Batch approvals: schedule one 30 to 45 minute weekly review slot per function (product, security, legal) instead of ad hoc pings.
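
The "deltas, not full reads" idea can be approximated with a small filter that pulls out only the sentences an SME needs to verify. The sketch below is an assumption-heavy illustration: the watch list, the naive sentence splitter, and the `delta_checklist` name are invented for the example, and a real rulebook would supply its own terms.

```python
import re

# Illustrative watch list; a real one would come from your claims rulebook.
TERM_RE = re.compile(r"\b(soc 2|hipaa|gdpr|pricing|uptime|best)\b", re.IGNORECASE)
NUMBER_RE = re.compile(r"\d")


def delta_checklist(draft):
    """Return the sentences that contain a number or a watched term.

    Sentence splitting is deliberately naive (split after ., ! or ?),
    which is good enough for a sketch but not for production text.
    """
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if NUMBER_RE.search(s) or TERM_RE.search(s)]


draft = (
    "Open Settings and create an API key. "
    "Our platform is HIPAA compliant. "
    "Sync jobs finish in under 5 minutes. "
    "Share the key with your team."
)
for claim in delta_checklist(draft):
    print("VERIFY:", claim)
```

In this example the reviewer sees two sentences instead of four. On a 2,000-word draft, the same filter is what makes a 30 to 45 minute batch slot realistic.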

Tools that behave like autonomous agents, including Balzac, help most when they keep the queue organized, enforce constraints, and route drafts to the right approver. Humans still decide what the company is willing to claim in public.

Cost Model Breakdown: Tools, Headcount, Agencies, and the New AI Curve

Approval gates cost money. In AI SEO, you pay for judgment and risk control. In traditional SEO, you also pay for production labor and coordination. That difference drives the biggest cost gap in AI SEO vs traditional SEO: the marginal cost of publishing the next page.

Traditional SEO cost piles up in three places: people, handoffs, and tooling. A typical SaaS content pipeline touches an SEO strategist, a writer, an editor, a designer (for images), and sometimes a developer (for templates, schema, or page speed fixes). Each handoff adds meetings, revisions, and waiting time. Tools like Ahrefs or Semrush add recurring cost, and agencies usually bundle labor plus markup into a monthly retainer.

AI SEO shifts spend from per-asset labor to platform cost plus review. The agent handles repetitive work: keyword clustering, briefs, first drafts, metadata, internal link suggestions, and CMS formatting. Humans still review claims, add product reality, and approve sensitive pages (security, compliance, pricing). The result is a cost curve that flattens as volume increases.
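
One way to see why the curve flattens is to write cost per page as a fixed monthly cost spread across volume, plus the human hours each page still needs. The function below is a minimal sketch of that arithmetic. Every input in the usage loop is a made-up placeholder chosen only to show the shape of the curve, not a benchmark or a quoted price.

```python
def cost_per_page(pages, fixed_monthly, hours_per_page, hourly_rate):
    """Average cost per published page in a month.

    fixed_monthly: tooling or platform spend that does not change with volume
    hours_per_page: human hours each page still requires
    hourly_rate: blended cost of those hours
    """
    if pages <= 0:
        raise ValueError("pages must be positive")
    return fixed_monthly / pages + hours_per_page * hourly_rate


# Placeholder inputs, for illustration only.
for pages in (5, 20, 40):
    manual = cost_per_page(pages, fixed_monthly=500, hours_per_page=10, hourly_rate=60)
    automated = cost_per_page(pages, fixed_monthly=2000, hours_per_page=1.5, hourly_rate=60)
    print(pages, round(manual, 2), round(automated, 2))
```

The marginal cost of the next page is the `hours_per_page * hourly_rate` term. Automation shrinks that term while raising the fixed one, so the advantage only shows up once volume is high enough to absorb the platform cost.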

Where Automation Reduces Cost Per Published Page

Automation reduces marginal cost when it removes paid hours from the repeatable steps:

- Research and briefing: fewer strategist hours spent exporting keywords, clustering, and writing briefs.

- Draft production: fewer writer hours per page, especially for long-tail how-tos, integration pages, and comparison pages.

- On-page hygiene: fewer specialist hours fixing titles, headings, alt text, and basic schema suggestions.

- Internal linking and refreshes: fewer quarterly “cleanup projects” because the system proposes links and updates continuously.

- Publishing ops: fewer marketing ops hours copying from Google Docs into WordPress, Webflow, or Contentful.

The hidden savings come from fewer coordination cycles. Traditional SEO burns time when SMEs review late and editors rewrite for positioning after the draft exists. An AI SEO workflow that enforces constraints early (required entities, disallowed claims, brand voice rules) reduces rework.

Budget still matters for quality. If you cut human review to zero, you often trade labor savings for brand and compliance risk. The best SaaS programs use AI to produce volume, then spend human time where it changes outcomes: product screenshots, first-party data, differentiated POV, and conversion-focused updates tied to pipeline metrics in Google Analytics 4.

Quality and Differentiation: How SaaS Teams Avoid Generic AI Content

Product screenshots and first-party metrics are the fastest way to separate AI SEO from generic content farms. A model can synthesize what already exists on the SERP. It cannot know how your SaaS behaves in production, what customers struggle with in onboarding, or which feature actually wins deals. In AI SEO vs traditional SEO, quality comes from inputs, not from drafting speed.

AI fails in predictable ways when teams skip those inputs: it repeats competitor phrasing, overpromises on security or performance, and writes “best practices” pages that never mention your product’s workflow. Google can index that content, but readers bounce because it feels interchangeable.

Inputs That Make AI SEO Content Defensible

- Product truth: add the exact UI steps, limitations, and defaults. “Click Settings → API Keys” beats generic setup advice. Keep a shared “product facts” doc (features, integrations, pricing rules, support SLAs) that the system must follow.

- First-party data: use what you already have. Pull onboarding drop-off points from Amplitude or Mixpanel (product analytics), pipeline conversion from HubSpot or Salesforce, and query patterns from Google Search Console. Even one chart or table sourced from internal data makes the page hard to copy.

- Opinionated POV: write the “why” your team believes. Example: “We recommend event naming conventions that match billing objects because it reduces reporting rework.” AI can draft structure, but a human needs to set the position.

- Named entities and concrete examples: cite real standards and artifacts (SOC 2 Type II, ISO 27001, OpenAPI, SAML, SCIM) only when they are true for your product. Add customer-facing artifacts like a redacted runbook snippet, a sample dashboard, or a support checklist.

- Editorial rules that constrain claims: require sources for any statistic, ban “HIPAA compliant” unless legal approves, and force “who this is for” sections so intent stays tight.

The practical move for SaaS teams is to treat AI drafts as a base layer. Then humans add the parts that create differentiation: screenshots, real configuration details, and numbers tied to your funnel in Google Analytics 4.

Autonomous agents like Balzac help when they enforce those constraints at scale, for example by templating required sections, flagging unapproved claims, and reusing a controlled product fact set across hundreds of pages.

What Do Google’s Helpful Content Signals Mean for AI SEO in 2026?

Google does not grade content on whether a human or a model typed it. Google grades outcomes: whether the page satisfies the query, shows credible experience, and avoids scaled spam patterns. For AI SEO, that means your automation has to ship pages that read like they came from a real SaaS team with product context, not a generic template filled at scale. That is the operational core of AI SEO vs traditional SEO in 2026: automation increases output, so QA rules decide whether you earn trust or create liabilities.

Google’s “helpful content” direction and E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) translate into publishing constraints you can enforce in an AI pipeline. Google documents these concepts in its Creating helpful, reliable, people-first content guidance and its E-E-A-T section.

Concrete Helpful Content QA Rules for Automated SaaS Publishing

- Prove experience on the page: require at least one product-specific artifact per article (UI steps, a screenshot callout, a config example, an actual workflow). If the draft cannot cite how the tool works, route it to SME review.

- Attach ownership: add an author profile with relevant role context (Product Marketing, Solutions Engineer). If your CMS supports it, add editor and reviewer fields for sensitive topics.

- Enforce claim hygiene: block unverified statements about SOC 2, HIPAA, GDPR, uptime, performance, pricing, and “best” rankings. Require a source link or an internal approved fact for every number.

- Match intent before keywords: make the brief declare the query intent (definition, comparison, setup, troubleshooting). Reject drafts that bury the answer under generic background.

- Control internal links like product UX: allow automation to suggest links, but restrict automatic insertion on money pages (pricing, security, demo) unless a human approves anchors and targets.

- Stop template footprints: vary examples, headings, and recommended tools across pages in the same cluster. Repeated section patterns across hundreds of URLs look like scaled production.

- Require a refresh trigger: define update rules based on Google Search Console and Google Analytics 4 data (CTR drops, impressions rise without clicks, ranking slips). Queue refreshes automatically, but keep a human gate for messaging changes.
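
The refresh triggers in the last rule are simple enough to express as code. The sketch below assumes you already export two comparable periods of per-page data from Google Search Console. The `PageStats` fields, the threshold defaults, and the reason labels are assumptions for illustration, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    impressions_prev: int
    impressions_now: int
    clicks_prev: int
    clicks_now: int
    position_prev: float  # average position; lower is better
    position_now: float


def ctr(clicks, impressions):
    return clicks / impressions if impressions else 0.0


def refresh_reasons(p, ctr_drop=0.2, position_slip=3.0):
    """Return the list of triggers a page has tripped (empty means healthy)."""
    reasons = []
    before = ctr(p.clicks_prev, p.impressions_prev)
    after = ctr(p.clicks_now, p.impressions_now)
    if before > 0 and (before - after) / before >= ctr_drop:
        reasons.append("ctr_drop")
    if p.impressions_now > p.impressions_prev and p.clicks_now <= p.clicks_prev:
        reasons.append("impressions_up_clicks_flat")
    if p.position_now - p.position_prev >= position_slip:
        reasons.append("ranking_slip")
    return reasons


page = PageStats("/blog/rotate-api-keys", 1000, 1500, 50, 45, 4.0, 8.5)
print(page.url, refresh_reasons(page))
```

Pages with a non-empty list go into the refresh queue. Whether the refresh itself needs a human gate depends on whether messaging changed, which this check does not try to detect.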

Autonomous agents such as Balzac help most when they enforce these rules automatically: they can flag unapproved claims, require product artifacts, and block publishing until a page passes your “helpful content” checklist.

When Should You Choose AI SEO, Traditional SEO, or a Hybrid?

Your choice between AI SEO and traditional execution comes down to risk tolerance and page type. If your “helpful content” checklist blocks publishing until claims, screenshots, and positioning are correct, you can automate aggressively. If the business cannot accept a single wrong sentence about compliance, security, or pricing, keep more of the workflow manual. That is the real decision in AI SEO vs traditional SEO for SaaS teams.

- Choose AI SEO when you need volume and your topics are pattern-based: long-tail how-tos, glossary pages, integration explainers, feature pages that follow a template, and refreshes across dozens of decaying URLs.

- Choose traditional SEO when the content carries high downside risk or depends on original reporting: regulated industries, security assertions, medical or financial guidance, executive thought leadership, and deep technical architecture content.

- Choose a hybrid when you want scale but must protect accuracy: automate research, briefs, drafts, internal linking, and monitoring, then require human review on specific page classes.

Scenario-Based Decision Criteria for SaaS

High content volume and long-tail coverage: AI SEO wins when the backlog is the problem. If you have hundreds of “how to X in Y tool” queries and competitors publish weekly, automation keeps cadence stable. Use systems that connect to Google Search Console and Semrush so they can prioritize topics that already show impressions.

Regulated or high-liability categories: Traditional SEO, or a tightly governed hybrid, is safer for healthcare (HIPAA), finance, and any product that markets security controls (SOC 2 Type II, ISO 27001). Require legal and security signoff for any page that mentions certifications, data handling, encryption, retention, or breach response. Treat those statements like product documentation, not blog copy.

Thought leadership and category creation: Humans should drive the thesis, the contrarian angle, and the evidence. AI can still help by generating outlines, pulling counterarguments from SERPs, and formatting drafts, but a generic model output will not earn links from sites like TechCrunch or The Verge.

Technical accuracy and developer audiences: Use a hybrid. Let AI draft, then require engineering review for anything that touches APIs (OpenAPI), auth (OAuth 2.0, SAML), provisioning (SCIM), or performance. AI often gets edge cases wrong, and developers notice immediately.

Teams with limited headcount: AI SEO fits when you can commit to a repeatable review slot. A tool like Balzac makes sense if it can keep drafts queued, enforce your “no unapproved claims” rules, and publish to WordPress, Webflow, or Contentful only after approval.

AI SEO Implementation Checklist for SaaS Teams (Governance + QA)

A repeatable review slot only works if your AI SEO system has rules, owners, and stop conditions. Otherwise, AI SEO vs traditional SEO becomes “faster chaos”: more drafts, more risk, and the same publishing bottleneck. Use this checklist to roll out automation without losing governance.

- Set the strategy boundary: write a one-page SEO charter that states your ICP, jobs-to-be-done, and page types you will publish (how-to, integration, comparison, alternatives). Define exclusions (medical advice, legal advice, unverified competitor claims).

- Create a topic approval gate: require a human to approve the weekly topic queue. Score each topic for product fit and risk (security, compliance, pricing, “best” claims). Auto-approve low-risk how-tos if you want volume.

- Lock a brand voice spec: document tone, terminology, capitalization, and banned phrases. Add “must include” blocks such as a short “Who This Is For” section and a product-specific example.

- Build a claim policy: maintain an approved fact set for pricing, uptime, security posture, and compliance. Block publishing if the draft includes SOC 2, HIPAA, GDPR, ISO 27001, or performance numbers without an internal source.

- Define reviewer ownership by risk: map topics to approvers (Product Marketing for positioning, Solutions Engineering for technical steps, Security or Legal for regulated claims). Keep a 24 to 48 hour SLA for reviews or the queue stalls.

- Standardize SME review to a delta checklist: require the system to surface (a) factual claims, (b) product workflow steps, (c) competitor mentions, (d) CTAs. SMEs approve the list, not a full read.

- Set CMS safeguards: publish through roles and permissions in WordPress, Webflow, or Contentful. Restrict automation from editing pricing, security, and demo pages. Require a staging preview for new templates, tables, and schema changes.

- Enforce internal linking rules: allow automatic links inside the blog, require human approval for anchors pointing to money pages (pricing, security, request-a-demo). Prevent link insertion that changes the primary keyword focus of an existing URL.

- Define refresh cadence and triggers: monitor Google Search Console for indexation, impressions, and CTR drops, and Google Analytics 4 for engagement and assisted conversions. Queue refreshes at 30, 60, and 120 days for new pages, then quarterly for winners.

- Instrument measurement before scaling: standardize UTM rules for CTAs, set GA4 conversions, and annotate publish dates in your reporting. Track: indexed pages, non-branded clicks, leads from organic, and conversion rate by page type.
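
Several items in this checklist are rules a pipeline can enforce directly. As one example, the internal linking and CMS safeguard rules might look like the sketch below. The URL paths, the `/blog/` prefix, and the three outcome labels are assumptions about a hypothetical site structure.

```python
# Assumed paths for the "money pages" named in the checklist.
MONEY_PATHS = ("/pricing", "/security", "/request-a-demo")


def is_money_page(path):
    """True for a money page or anything nested under it."""
    return any(path == p or path.startswith(p + "/") for p in MONEY_PATHS)


def link_action(source_path, target_path):
    """Decide what happens to a link suggestion from source to target."""
    if is_money_page(source_path):
        return "blocked"  # automation does not edit money pages
    if is_money_page(target_path):
        return "needs_approval"  # a human approves anchors pointing at money pages
    if source_path.startswith("/blog/") and target_path.startswith("/blog/"):
        return "auto_insert"  # blog-to-blog links are low risk
    return "needs_approval"  # anything unclassified defaults to review


print(link_action("/blog/scim-setup", "/blog/saml-vs-scim"))
print(link_action("/blog/scim-setup", "/pricing"))
print(link_action("/pricing", "/blog/scim-setup"))
```

Defaulting unknown cases to review is a deliberate choice here: a missed automatic link costs little, while an unreviewed edit to a revenue page can cost a lot.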

Governance Tests Before You Increase Volume

- Red team one draft: try to force incorrect compliance or pricing claims. Your pipeline should block it.

- Audit 10 published pages: verify consistent voice, correct internal links, and accurate product steps.

- Run a rollback drill: confirm you can unpublish or revert within minutes if a page ships a risky claim.

Where an Autonomous SEO Agent Like Balzac Fits (and Where It Doesn’t)

Governance only works when the machine can execute cleanly inside your rules. That is where an autonomous agent like Balzac fits in AI SEO: it runs the production pipeline end to end, then pauses at the gates you define. In AI SEO vs traditional SEO, this is the practical shift: you move human time from drafting and formatting to approval, differentiation, and risk control.

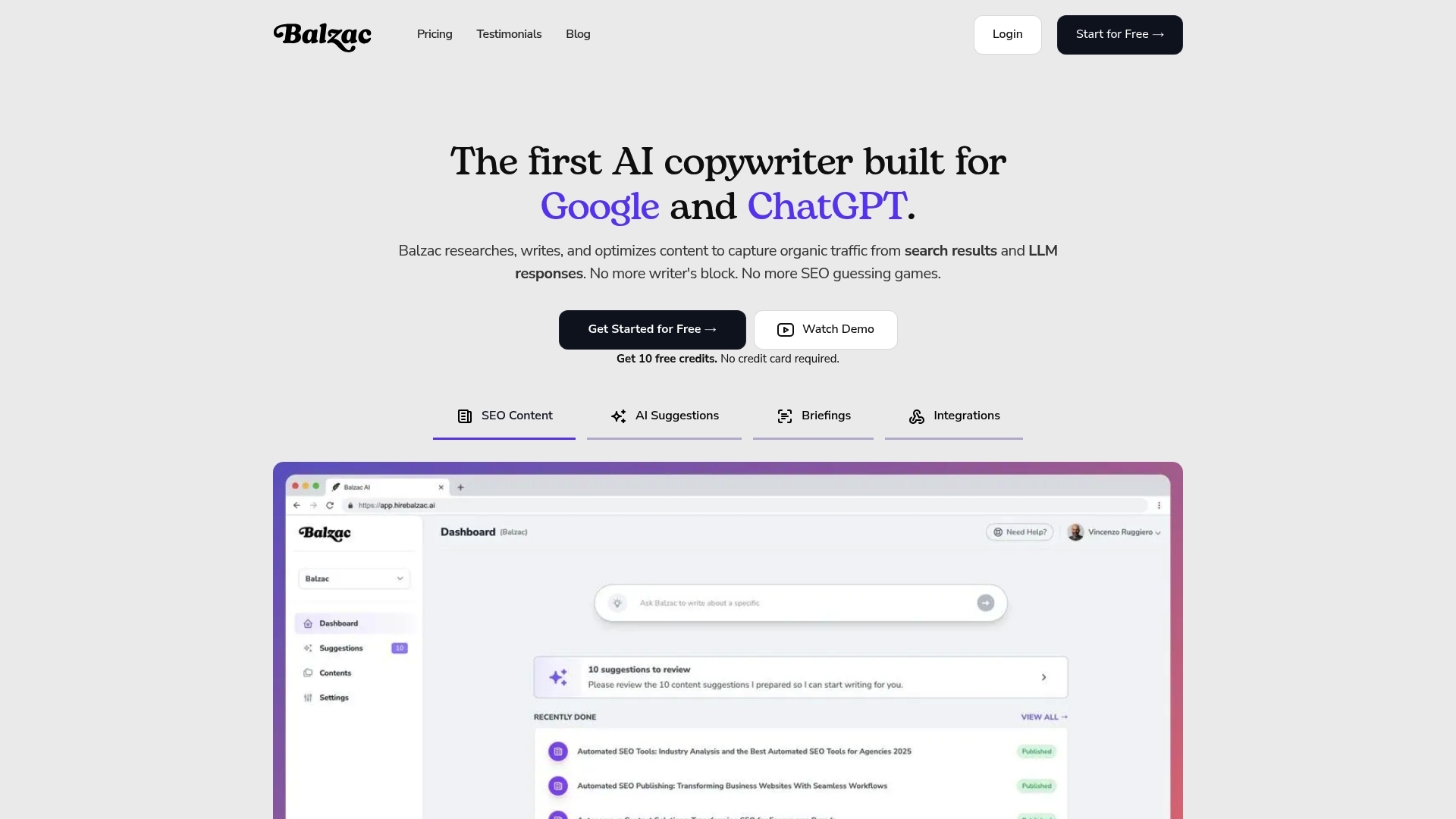

Balzac makes the most sense when your team already knows what “good” looks like and needs a system to produce it repeatedly: topic discovery from competitor SERPs, brief creation, SEO-focused drafting, internal linking suggestions, and CMS publishing. The value is operational. It keeps a queue moving without waiting on a writer schedule, and it standardizes the boring parts (metadata, headings, formatting) that teams often ship inconsistently.

Where Balzac Fits Best in AI SEO

- Long-tail scaling: high-volume how-tos, integration explainers, glossary pages, and template-driven feature pages where structure repeats and intent is clear.

- Refresh at scale: updating decaying URLs on a cadence using signals from Google Search Console and Google Analytics 4, with drafts queued for approval.

- Internal linking hygiene: consistent link suggestions across clusters, plus controlled insertion on low-risk pages.

- CMS operations: publishing to WordPress, Webflow, or Contentful without manual copy-paste and formatting drift.

Balzac fits less well when the “stop conditions” trigger constantly. If every page needs security, legal, or engineering signoff, the agent still helps with drafts and formatting, but your throughput depends on reviewers, not automation. The same is true for thought leadership that requires original reporting, executive voice, or claims you cannot validate from an approved fact set.

A simple way to deploy an autonomous agent safely is to separate page classes by risk:

- Auto-publish: low-liability educational content with strict claim rules and a required product artifact (UI steps, screenshot callout, config example).

- Review required: anything that mentions SOC 2, HIPAA, GDPR, pricing, SLAs, performance numbers, or competitor rankings.
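
The two page classes above can be approximated with a pattern check on the draft. This is a rough sketch and not how Balzac or any specific agent works: the patterns, the performance-number proxy, and the `page_class` helper are assumptions. False positives are expected and acceptable, because they only send a page to review.

```python
import re

# Rough patterns for the review-required list; tune these to your own claim policy.
REVIEW_PATTERNS = [
    r"\bSOC\s*2\b",
    r"\bHIPAA\b",
    r"\bGDPR\b",
    r"\bpricing\b",
    r"\bSLAs?\b",
    r"\d+(?:\.\d+)?\s?(?:%|ms\b|x\b)",  # crude proxy for performance numbers
    r"\b(?:best|top|alternatives)\b",   # crude proxy for competitor rankings
]


def page_class(draft, has_product_artifact):
    """Classify a draft as 'auto_publish' or 'review_required'.

    A draft auto-publishes only if no review pattern matches and it
    carries a product artifact (UI steps, screenshot callout, config example).
    """
    flagged = any(re.search(p, draft, re.IGNORECASE) for p in REVIEW_PATTERNS)
    if flagged or not has_product_artifact:
        return "review_required"
    return "auto_publish"


print(page_class("Click Settings, then API Keys, to rotate a key.", True))
print(page_class("Uptime is 99.9% across regions.", True))
```

Keeping the artifact requirement inside the same check means a generic draft with no product detail never reaches auto-publish, even when it makes no risky claims.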

If you want a concrete next step: pick one cluster (20 to 40 pages), write the claim and voice rules once, then run AI SEO with a weekly 45-minute approval slot. If the agent can ship that cluster without rework, you have a repeatable system. If it cannot, the blocker is not content speed; it is missing governance inputs.