Your AI writer can publish 30 posts this month and still move your organic traffic by exactly zero. That’s the trap in the SEObot vs Balzac decision: one product is built to keep the content calendar full, the other is built to watch what Google is already showing you and react.

If you’re looking for a SEObot alternative, you probably already learned the obvious lesson: decent prose is cheap. The expensive part is picking winnable queries, connecting topics to Google Search Console reality, and updating pages when impressions show you where the next lift is. That’s where “autonomous SEO” either becomes a feedback loop or becomes scheduled posting.

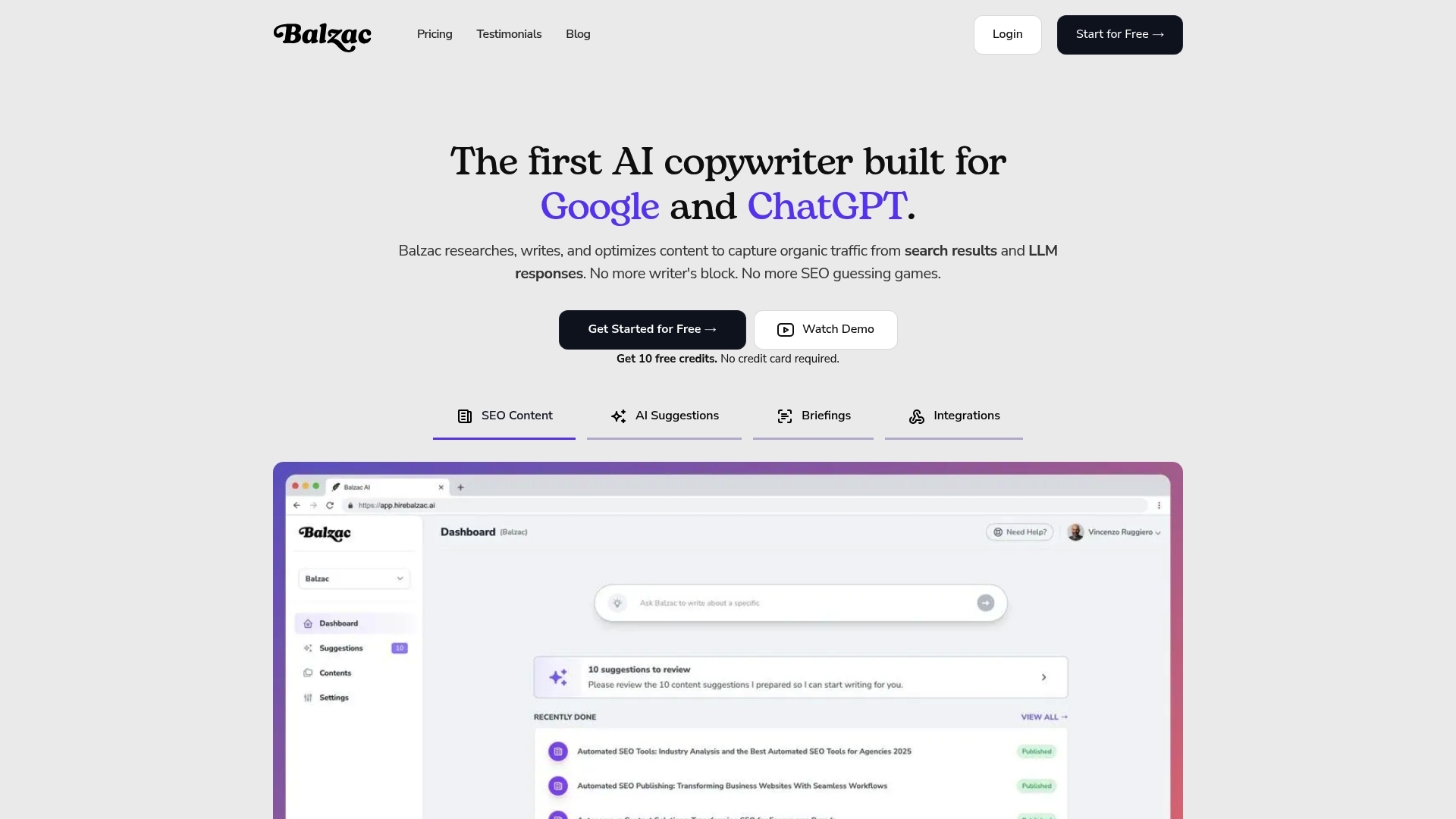

This guide breaks down the differences that matter for SaaS teams: how each tool chooses topics, what happens after a post goes live, how safe the publishing workflow is, and what developers can automate with Balzac’s CLI and API. If you care about compounding traffic instead of content volume, you’ll feel the gap fast.

Feature Comparison Table: SEObot vs Balzac at a Glance

Feedback loops show up as features. If you are evaluating a SEObot alternative, this table makes the tradeoffs obvious: SEObot focuses on automated writing and publishing, while Balzac behaves more like an autonomous SEO agent that reads performance signals and adjusts what it ships next.

| Capability | SEObot | Balzac |
|---|---|---|
| Google Search Console (GSC) Integration | Limited or indirect feedback loop for content decisions (no GSC-native optimization loop) | Direct GSC integration for query and page performance signals that guide topic selection and updates |
| Keyword Research And Topic Discovery | Lightweight topic generation, less emphasis on deep keyword research | Deeper keyword discovery and prioritization, designed to pick topics with clearer ranking paths |
| Keyword Tracking After Publishing | Basic monitoring, less iteration driven by rankings and impressions | Keyword tracking and ongoing iteration loop tied to performance changes |
| Content Refresh And Optimization | Primarily net-new content output | Refreshes and optimizations based on GSC signals (queries, CTR, impressions, page-level trends) |
| Autonomous Publishing | Automates posting, depending on your CMS setup | Automatic publishing with workflow controls (drafts, approvals, scheduling) depending on team preference |
| Workflow Controls | More “set it and publish” oriented | More “measure, decide, ship” oriented, with optional human review gates |
| Developer Tooling | Primarily UI-driven automation | CLI and API for programmatic control (pipelines, environments, bulk operations) |
| Best Fit | Teams that want fast content output with minimal configuration | SaaS teams that want autonomous SEO tied to rankings and clicks, not output volume |
| Pricing Signals | Varies by plan and volume (verify on SEObot’s pricing page) | Varies by plan and automation scope (verify on Balzac’s pricing page) |

People searching “seobot vs balzac” usually care about one question: does the system learn after publishing? If your content process lives or dies by Google Search Console data, Balzac is built around that loop. If you mainly need a “SEObot AI” style content engine to fill a blog calendar, SEObot maps to that job more directly.

How To Read This Table As A Buyer

Start with GSC integration and post-publish tracking. Those two rows predict whether you will spend your time editing drafts or deciding what to write next. Then look at developer tooling. If you want content automation inside CI, scheduled jobs, or internal dashboards, a CLI and API matter more than another prompt template.

1. GSC-Driven Intelligence vs Content-Only Automation

SEObot vs Balzac starts with one question: do content decisions come from Google’s own feedback, or from a pre-publish content pipeline? Google Search Console (GSC) is the closest thing you get to “ground truth” for SEO because it shows what queries already trigger impressions, where you rank, and what pages earn clicks. Tools that treat GSC as a first-class input can pick better topics and fix the right pages faster.

Balzac positions itself as an autonomous SEO agent, so it uses GSC signals to steer what it writes and what it updates. SEObot is closer to content-only automation: it can generate posts, but it has a lighter measurement loop, so you often end up doing the analysis work outside the tool.

How GSC-Driven Automation Changes Content Decisions

GSC-driven intelligence means the system can react to what Google is already testing your site for. In practice, Balzac can use GSC data to prioritize pages that already have momentum, then push them over the edge with targeted updates. That is how you turn “we published 30 posts” into “these 6 pages moved from position 8 to position 3.”

Here are the specific GSC signals that matter for autonomous content decisions:

- Queries with high impressions and low CTR: rewrite titles and meta descriptions, then adjust intros to match intent.

- Queries ranking in positions 4-15: expand sections, add missing subtopics, and tighten internal links to improve relevance.

- Pages with decaying clicks: refresh content, update screenshots, and revalidate recommendations.

- New queries appearing for an existing URL: add a short section that answers the new intent instead of publishing a duplicate post.
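The four signals above can be sketched as a small rule set. This is a minimal illustration, not Balzac's implementation: the `QueryRow` shape, the `recommend_action` helper, and every threshold are assumptions chosen for the example, and the rule order is a judgment call you would tune for your own site.

```python
from dataclasses import dataclass

# Illustrative thresholds only; these are assumptions, not GSC or Balzac defaults.
LOW_CTR = 0.02            # below 2% CTR counts as "low"
HIGH_IMPRESSIONS = 1000   # at or above this counts as "high impressions"
DECAY_RATIO = 0.7         # clicks below 70% of the previous period count as decay


@dataclass
class QueryRow:
    """One (page, query) row, shaped like a Search Console performance export."""
    page: str
    query: str
    clicks: int
    impressions: int
    position: float              # average position for the period
    prev_clicks: int = 0         # clicks in the previous comparison period
    is_new_query: bool = False   # query did not appear for this page last period


def recommend_action(row: QueryRow) -> str:
    """Map a row to one of the four update types from the list above."""
    ctr = row.clicks / row.impressions if row.impressions else 0.0
    if row.is_new_query:
        return "add a section for the new intent"
    if row.prev_clicks > 0 and row.clicks < DECAY_RATIO * row.prev_clicks:
        return "refresh decaying page"
    if row.impressions >= HIGH_IMPRESSIONS and ctr < LOW_CTR:
        return "rewrite title and meta description"
    if 4 <= row.position <= 15:
        return "expand sections and tighten internal links"
    return "no action"
```

For example, `recommend_action(QueryRow("/pricing", "saas pricing models", 10, 2000, 6.0))` falls into the high-impression, low-CTR rule and suggests a title and meta description rewrite before any section expansion.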

Balzac’s approach fits teams that want a “closed loop” system: publish, measure in GSC, then iterate based on the same dataset Google uses to report performance. If you want to sanity-check what GSC reports, Google documents the metrics and quirks in its own Search Console Performance report guide.

With “SEObot AI” style workflows, many teams still end up exporting GSC data, building a spreadsheet, and deciding manually which URLs deserve updates. That can work, but it shifts the most valuable part of SEO, the prioritization, back onto the human. If your goal is autonomy, GSC-driven decisions are the difference between automated drafting and automated growth.

2. Keyword Research Depth and Topic Selection Quality

Keyword research is where “autonomous” either becomes real or turns into scheduled posting. A SEObot alternative needs a reliable way to find winnable queries, map them to pages, and avoid writing posts that never get impressions.

In practice, SEObot vs Balzac splits on how topics get chosen. SEObot tends to start from broad themes and generate posts from those inputs. That works when you already know what to write and you mainly want speed. It breaks down when you need the system to discover demand, filter out low-intent keywords, and prioritize based on what your site can realistically rank for.

Balzac approaches topic selection like an SEO operator. It uses Google Search Console signals (queries, impressions, clicks, CTR, and page performance) as a starting dataset, then expands into related opportunities. That matters because GSC shows what Google already associates with your site. You can turn “nearly ranking” queries into targeted pages instead of guessing from scratch.

What “Better Topic Selection” Looks Like Day to Day

The quality gap shows up in the decisions your system makes before it writes a single sentence. Here are the practical differences that affect ranking odds and relevance:

- Seed source: SEObot often relies on user-provided topics and lightweight generation. Balzac can start from your existing GSC query set, which is closer to real demand for your domain.

- Prioritization: SEObot’s workflow is usually “publish more.” Balzac can prioritize queries where you already earn impressions but sit in mid positions, because those terms often respond to on-page improvements and clearer intent match.

- Intent filtering: Topic generators that do not check SERPs tend to mix informational, navigational, and transactional intent. Balzac’s GSC-driven approach reduces that mismatch because the queries come from how users already find you.

- Cannibalization risk: When a tool creates many similar posts, it can split signals across overlapping URLs. A system that references existing pages and their queries can decide to refresh one URL instead of creating competing ones.

- Relevance to your product: “SEObot AI” style content pipelines can drift into generic content that reads fine but does not connect to your SaaS. GSC queries usually reflect your actual audience vocabulary, including feature terms and integration names.
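The cannibalization check in the list above is easy to approximate from a page-and-query export. The sketch below assumes a hypothetical input of `(page, query, impressions)` tuples and an arbitrary `min_impressions` cutoff; it shows the idea only and is not how either product implements it.

```python
from collections import defaultdict


def find_cannibalized_queries(rows, min_impressions=50):
    """Return {query: [pages]} for queries where more than one page earns impressions.

    `rows` is an iterable of (page, query, impressions) tuples, a hypothetical
    shape for a Search Console export grouped by page and query.
    """
    pages_by_query = defaultdict(set)
    for page, query, impressions in rows:
        # Ignore stray impressions so one-off appearances do not trigger a flag.
        if impressions >= min_impressions:
            pages_by_query[query].add(page)
    return {
        query: sorted(pages)
        for query, pages in pages_by_query.items()
        if len(pages) > 1
    }
```

Any query this returns is a candidate for consolidating into one URL, or for refreshing the stronger page instead of publishing a new one.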

If you want a quick gut-check, open Google Search Console and sort queries by impressions with low CTR. Those are often topic and title opportunities hiding in plain sight. Google documents this workflow in its Search Console training materials (Performance report overview).

Keyword research depth is not about generating longer lists. It is about selecting fewer topics with clearer intent, less overlap, and a tighter connection to what your site already ranks for.

3. Keyword Tracking and Iteration After Publishing

Post-publish is where “autonomous SEO” either compounds or stalls. In the SEObot vs Balzac decision, keyword tracking matters because it tells you whether the system can turn a good topic choice into a better ranking page over weeks, not hours. Without tracking, you ship content and hope. With tracking, you can spot which URLs are close to breaking through and update them with purpose.

After an article goes live, the questions get concrete: Which queries trigger impressions? Which keyword sits at position 11 with rising impressions? Which pages lost clicks after a SERP change? Those answers live in Google Search Console, and they drive the refresh backlog that actually grows traffic.

What “Iteration” Looks Like In Practice

Balzac treats iteration as a loop tied to performance signals. It can watch query and page trends in GSC, then propose or ship updates that target specific movements (CTR drops, position plateaus, new long-tail queries). That behavior matches how SEO teams operate in tools like Google Search Console, Ahrefs (an SEO backlink and keyword research tool), or Semrush (an SEO suite with rank tracking), except the update work becomes automated.

SEObot is closer to a content-only engine. It can publish consistently, but the iteration loop is lighter. Many teams end up doing the measurement and prioritization outside SEObot, then manually deciding what to refresh and when. That is fine if you already have an SEO operator, but it weakens the “agent” promise for teams looking for a SEObot alternative that improves over time.

Use this checklist to judge whether a tool actually learns after publishing:

- Tracks target keywords per URL, so you can tell whether a refresh moved position 9 to 4.

- Monitors query drift, the new queries GSC starts associating with an existing page.

- Flags CTR problems (high impressions, low clicks), then rewrites titles and meta descriptions for the actual query language.

- Creates a refresh queue based on opportunity, not publish date (pages ranking 4-15 usually deserve attention first).

- Prevents cannibalization by updating an existing URL when it already ranks, instead of publishing a near-duplicate post.
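Two items on that checklist, the opportunity-based refresh queue and query drift, fit in a few lines. This is a sketch under stated assumptions: the dictionary keys (`url`, `position`, `impressions`, `clicks`) are a hypothetical row shape, and "impressions that did not become clicks" is only one possible opportunity score.

```python
def build_refresh_queue(pages, min_pos=4.0, max_pos=15.0):
    """Order pages by opportunity (impressions not yet converted to clicks), not publish date.

    `pages` is a list of dicts with hypothetical keys: url, position, impressions, clicks.
    Only pages whose average position falls inside [min_pos, max_pos] are queued.
    """
    in_range = [p for p in pages if min_pos <= p["position"] <= max_pos]
    return sorted(in_range, key=lambda p: p["impressions"] - p["clicks"], reverse=True)


def new_queries(previous, current):
    """Query drift: queries seen for a page this period that were absent last period."""
    return sorted(set(current) - set(previous))
```

A page already sitting at position 2 never enters the queue, while a page at position 12 with many unconverted impressions goes to the front regardless of when it was published.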

The compounding effect comes from choosing fewer topics with clearer intent, then revisiting the winners. A “SEObot AI” workflow that prioritizes net-new output can still work, but it often leaves easy gains on the table: the pages already earning impressions that need one more pass to capture clicks.

If you want the source-of-truth definitions for impressions, clicks, CTR, and average position, Google documents them in the Search Console Performance report guide.

4. Autonomous Publishing Workflows (Drafts, Approvals, CMS)

Google Search Console can tell you what to fix, but your workflow decides whether fixes ship. In SEObot vs Balzac, the publishing layer is where teams feel the difference between “content output” and an autonomous system that can run safely inside a real SaaS marketing process.

Most teams need three workflow modes: draft-only for review, scheduled publishing for a stable cadence, and fully automatic publishing when the guardrails are proven. A good SEObot alternative supports all three without turning your CMS into the failure point.

Publishing Workflows: Drafts, Approvals, Scheduling, CMS Reliability

SEObot tends to optimize for speed: generate a post and push it to your CMS once you connect it. That works for small sites that accept occasional formatting cleanup. It gets risky when you have brand requirements, legal review, or strict templates for product-led SEO pages.

Balzac is built around a more controlled workflow. Teams can keep posts in draft for editorial review, add an approval gate, and schedule publishing so releases match product launches and campaigns. That matters when you want consistent output without surprise posts going live at 2 a.m. with the wrong CTA.

Here is what “hands-off” looks like in practice when the workflow is mature:

- Draft-first mode: the agent writes, formats, and saves as a CMS draft so a human can check claims, screenshots, and positioning.

- Approval gate: a reviewer approves or rejects, then the system publishes or queues revisions.

- Scheduling: the system assigns publish dates so you avoid bursts that confuse subscribers and social distribution.

- CMS-safe formatting: headings, lists, code blocks, and internal links survive the CMS editor without manual rework.

CMS reliability sounds boring until it breaks. WordPress, Webflow, and Ghost each handle rich text differently, and API-based publishing often fails on edge cases like embedded tables, HTML sanitization, or category and tag mapping. If your tool cannot validate content before publishing, you inherit a steady stream of “why did this render wrong?” tickets.
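Pre-publish validation does not need to be elaborate to catch the common failures. The sketch below uses Python's standard `html.parser` to flag unbalanced tags, images without alt text, and a missing or duplicated H1. The `PrePublishCheck` class and `validate_html` function are hypothetical names for this example, and the checker is deliberately strict: it treats HTML's optional closing tags (such as an unclosed `<p>`) as problems, and it flags an empty `alt=""` even though that is legitimate for decorative images.

```python
from html.parser import HTMLParser

# Elements that never take a closing tag.
VOID_TAGS = {"img", "br", "hr", "input", "meta", "link"}


class PrePublishCheck(HTMLParser):
    """Collects simple structural problems while parsing an article body."""

    def __init__(self):
        super().__init__()
        self.open_tags = []
        self.h1_count = 0
        self.problems = []

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self.h1_count += 1
        if tag == "img" and not dict(attrs).get("alt"):
            self.problems.append("img without alt text")
        if tag not in VOID_TAGS:
            self.open_tags.append(tag)

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        if self.open_tags and self.open_tags[-1] == tag:
            self.open_tags.pop()
        else:
            self.problems.append(f"unexpected closing tag </{tag}>")


def validate_html(html: str) -> list[str]:
    """Return a list of problems; an empty list means the body passed these checks."""
    checker = PrePublishCheck()
    checker.feed(html)
    checker.close()
    problems = list(checker.problems)
    if checker.h1_count != 1:
        problems.append(f"expected exactly one h1, found {checker.h1_count}")
    problems.extend(f"unclosed <{tag}>" for tag in checker.open_tags)
    return problems
```

Running a check like this before the CMS API call turns a rendering ticket into a failed job you can see and retry.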

When buyers search “seobot ai,” they often picture a bot that publishes perfectly formatted posts forever. The reality is closer to DevOps: you want predictable deployments, rollback options, and review gates when stakes rise. Balzac’s workflow controls fit that model better than a set-and-publish approach.

5. Content Quality Controls That Prevent “AI Fluff”

Review gates and rollback options are useless if the content itself reads like generic “SEObot AI” filler. The real quality problem in autonomous SEO is predictable: the model writes plausible text that fails intent, repeats what already exists, and misses the on-page details Google evaluates.

In the SEObot vs Balzac comparison, the guardrails matter more than the writing model. A strong SEObot alternative needs controls that push the system toward search intent, unique angles, and clean on-page SEO, then keep it from creating duplicate pages that compete with each other.

Quality Guardrails That Matter in Production

These are the controls that separate “published words” from “ranking pages”:

- Structure that matches intent: Good automation outlines around the query, then answers it fast. For “how to” queries, that means steps and prerequisites. For “best X” queries, that means criteria, comparisons, and decision guidance. Tools that default to the same intro-body-summary pattern tend to underperform.

- Intent match from real queries: Balzac’s GSC-driven loop can anchor a page to the exact phrasing users typed, then expand sections around the queries that already trigger impressions. Content-only automation often guesses intent from a topic label, which is how you get a post that ranks for nothing.

- Internal linking that reflects site architecture: Autonomous content should link into existing product pages, integration pages, and related blog posts using descriptive anchors. This is where many SEObot-style workflows fall apart: they publish isolated articles that never distribute authority across the site.

- Anti-duplication and cannibalization checks: Before creating a new URL, the system should check whether an existing page already ranks for overlapping queries. If it does, update that URL instead of shipping a near-copy. Cannibalization is one of the fastest ways to waste crawl budget and split rankings.

- On-page SEO basics done consistently: Title tags and meta descriptions should match the primary query language, not a generic headline. H1 and H2s should map to sub-intents. Images need descriptive alt text. Schema markup (for example, FAQPage or HowTo where appropriate) should be deliberate, not sprayed everywhere.

If you want a concrete standard for what “helpful” content looks like, Google’s own documentation is a good baseline. Start with Creating Helpful, Reliable, People-First Content and the Title Links guidance, then evaluate whether your automation consistently follows those rules.

The practical difference: SEObot can keep your blog calendar full. Balzac is built to keep your content library coherent, intent-matched, and updated based on what GSC says users and Google respond to.

6. Developer Experience: CLI and API Automation

Workflow controls keep your content library coherent, but developers usually want the same thing they want from deployments: repeatability, environments, and a way to run the system without clicking around. That is where SEObot vs Balzac separates cleanly. Balzac exposes automation surfaces (CLI and API) that fit CI jobs, cron schedules, and internal tooling. SEObot is primarily a UI-driven product, so it is harder to treat content ops like code.

What Developers Actually Automate With a SEObot Alternative

A developer-friendly SEObot alternative lets you wire SEO content into the same places you already run production work: GitHub Actions, GitLab CI, CircleCI, Jenkins, Kubernetes CronJobs, or a simple Linux cron. Balzac’s CLI and API model supports that style of control, which matters when marketing wants autonomy but engineering still owns reliability.

Here are concrete automation patterns developers use when they have a CLI and API available:

- Environment separation: run “staging” and “production” content pipelines with different CMS targets, categories, and approval rules.

- Programmatic publishing: create drafts, update drafts, schedule posts, and publish via API calls, then log every action to Datadog or Grafana.

- Bulk operations: refresh a set of URLs that match a rule (for example, pages with falling clicks in Google Search Console), then queue updates.

- Release coordination: trigger content generation when a product release ships (for example, after a Git tag or a merged PR), so docs, changelogs, and “what’s new” posts follow a predictable cadence.

- Compliance and review gates: require an approval step before publishing, then enforce it in code instead of relying on someone remembering a checklist.

This is also where keyword tracking and GSC-driven iteration become operationally useful. If the agent can detect a drop in CTR or a page stuck in positions 4 to 15, developers can schedule an automated refresh job and route the draft to review, instead of waiting for a quarterly content audit.

SEObot can still work for teams that accept a simpler model: set topics, generate posts, connect a CMS, and publish. If you want the system to behave like an internal service with observability, permissions, and repeatable runs, Balzac’s developer tooling is the more natural fit for a SaaS team.

7. Which One Should You Pick for a SaaS Team? (3 Quick Scenarios)

SaaS teams rarely fail at publishing. They fail at picking the right battles, then shipping updates fast enough to turn impressions into clicks. If you are searching for a SEObot alternative, choose based on the operating model you want: a content engine that keeps the calendar full, or an autonomous SEO agent that measures, decides, and iterates.

Three SaaS Scenarios And The Right Pick

- Scenario 1: Early-stage SaaS that needs a blog fast, with minimal process.

  Pick SEObot if your main goal is steady posting volume and you can tolerate manual SEO analysis. SEObot fits teams that already know their topics (for example, “product updates,” “industry explainers,” “integration spotlights”) and want automated drafting and publishing without building a measurement loop.

- Scenario 2: Growth-stage SaaS that wants compounding traffic from existing momentum.

  Pick Balzac. This is the classic SEObot vs Balzac split: Balzac uses Google Search Console signals to decide what to write next and what to refresh. That matters when you already have pages sitting in positions 4 to 15, or queries with high impressions and weak CTR. A system that reads GSC can prioritize pages that are close to a breakthrough instead of guessing the next topic.

- Scenario 3: Developer-led SaaS that treats content like a deployable system.

  Pick Balzac if you want automation you can run and observe like any other internal service. Balzac’s CLI and API make sense when you want environment separation (staging vs production), scheduled runs, programmatic publishing, and repeatable workflows that fit CI. If your team talks about permissions, rollbacks, and reliability, a UI-only workflow will feel limiting.

People who search “seobot ai” often want a bot that writes forever. The better question is whether your system can learn: can it connect each URL to queries, rankings, and CTR, then ship targeted updates that move the needle?

If you want the safest next step, connect Google Search Console, pull your top queries by impressions, and identify the URLs ranking in positions 4 to 15. If that workflow sounds like your strategy, Balzac is the more complete choice for a SaaS team because it builds the automation around that loop.