SEO teams now compete on speed of execution, not just keyword strategy. Search demand shifts daily, SERP layouts change weekly, and content refresh cycles keep shrinking. In that environment, manual workflows break first: humans cannot research, draft, optimize, publish, and monitor hundreds of pages fast enough to match the feedback loop that search engines run.

This is why SEO tooling is changing shape. Traditional platforms, like Ahrefs, Semrush, and Google Search Console, still matter, but they mainly support human decision making. The next layer of tooling targets a different user: software that can act. An SEO AI agent does not stop at suggestions. It takes goals (grow clicks for a topic cluster, defend a ranking, expand long tail coverage), then executes tasks across tools and systems.

Why SEO Is Moving to Agent Workflows

Agent workflows replace handoffs with automation. Instead of a researcher handing notes to a writer, then to an editor, then to a publisher, an agent can run the loop end to end, with humans setting guardrails and approving exceptions.

- Scale: publishing and updating content across dozens or thousands of pages.

- Latency: reacting to ranking drops, competitor pages, and new queries in days, not weeks.

- Consistency: applying the same on page rules and internal linking logic every time.

- Lower coordination cost: fewer meetings, fewer tickets, fewer bottlenecks.

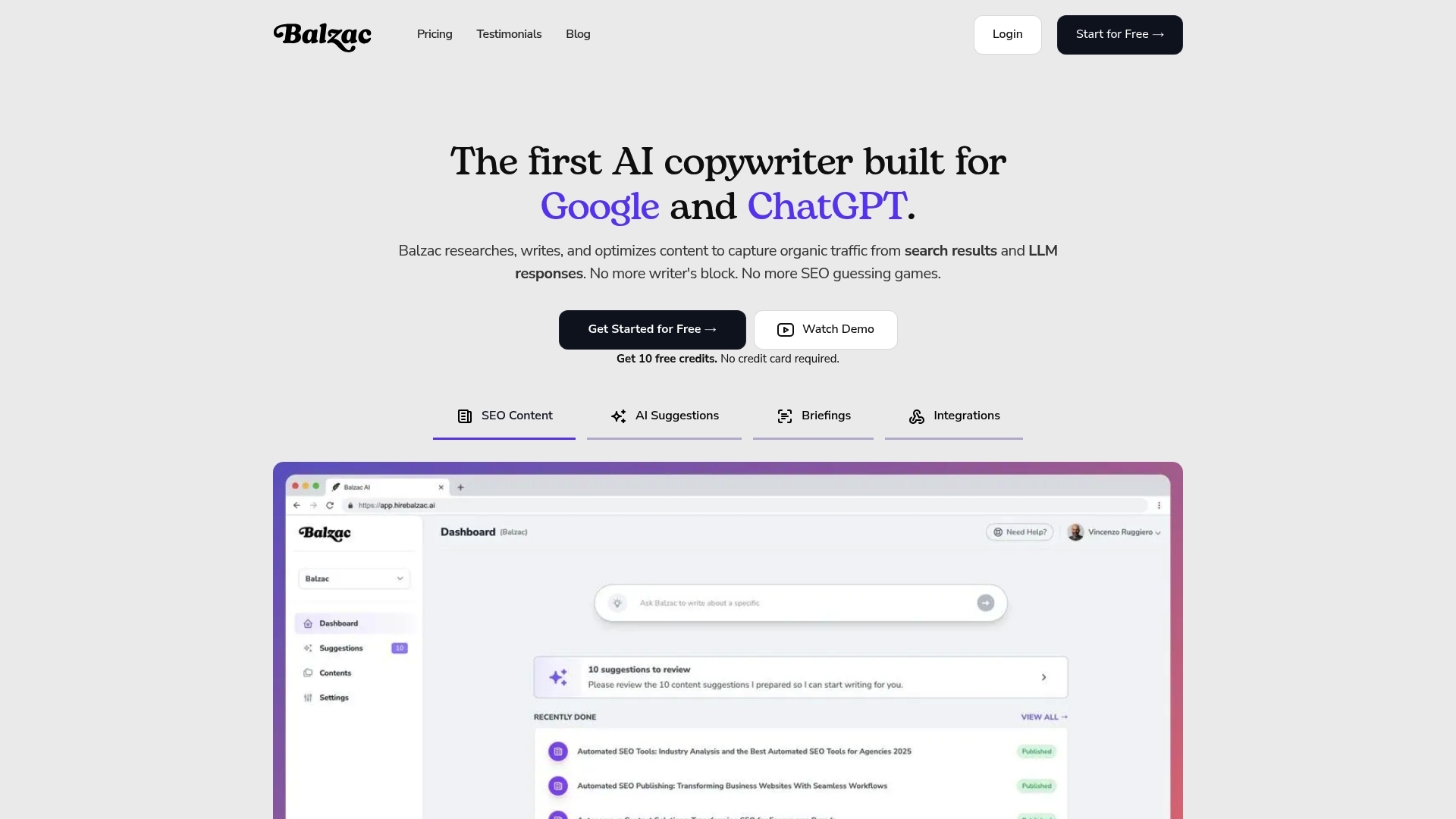

Tools like Balzac sit in this shift by automating research, writing, and publishing in a controlled loop. The rest of this article explains what SEO AI agents are, how they work, and how to evaluate them without losing quality or safety.

What Are SEO AI Agents?

The introduction described the shift: SEO work no longer stops at analysis and recommendations but extends into execution. SEO AI agents sit in that execution layer. They do not just suggest what to do; they run the workflow with minimal human input.

Definition: An SEO AI Agent

An SEO AI agent is software that plans, executes, and iterates SEO tasks based on goals, constraints, and feedback from real data. It acts more like an operator than a tool. It can decide what to do next (within guardrails), call other systems through APIs, and measure outcomes.

In practical terms, an SEO agent can handle tasks such as keyword research, SERP analysis, outline creation, drafting, internal linking suggestions, publishing to a CMS, and performance monitoring, then it uses results to choose the next topics or updates.

How SEO AI Agents Differ From Chatbots

A chatbot answers prompts. An agent completes objectives. Chatbots like ChatGPT can help you write a meta description or brainstorm titles, but they usually stop at the response. An agent keeps going after the message: it fetches inputs, applies rules, and pushes work into production systems.

How SEO AI Agents Differ From Copilots

Copilots keep a human in the driver's seat. SEO suites such as Ahrefs and Semrush increasingly add AI features, but the user still performs the sequence: choose the keyword, create the brief, write, publish, measure.

An agent flips that model. The human sets strategy and constraints (topics to avoid, tone, conversion goals, brand rules), then the agent runs the loop and asks for approval only when risk or uncertainty is high.

How SEO AI Agents Differ From Traditional SEO Suites

Traditional suites specialize in visibility and reporting, not action. They excel at telling you what happened and what might work next. Agents connect insights to production by integrating with systems like WordPress, Webflow, or Shopify, then shipping changes at scale.

What “Autonomous” Actually Means in SEO

Autonomy in SEO does not mean no control. It means the agent can operate continuously within rules such as:

- Clear objectives (grow non branded clicks, improve ranking clusters, refresh decaying pages)

- Guardrails (brand voice, compliance, sources allowed, topics excluded)

- Verification (fact checks, plagiarism checks, on page SEO checks)

Balzac fits this definition by generating SEO content and publishing it with minimal human involvement, while keeping teams focused on the constraints, approvals, and performance targets that matter.

How SEO AI Agents Work: From Research to Publishing

Most SEO AI agents run a simple loop: they decide what to do, collect evidence, create output, validate it, ship it, then measure results and adjust. The difference from a standard content tool is execution. The agent treats SEO as a system with inputs and feedback, not a one time draft.

The Core Agent Loop

- Plan: The agent sets a goal, such as growing clicks for a topic cluster or refreshing decaying pages, then selects the best next actions.

- Gather Signals: The agent pulls data from sources like Google Search Console (queries, pages, CTR), Google Analytics (engagement, conversions), SERP snapshots (intent, features), and competitor pages (structure, coverage).

- Generate: The agent drafts outlines, headings, and copy that match the inferred intent, while embedding entities and related questions that users search for.

- QA: The agent checks accuracy, policy risk, duplication, and on page requirements (title rules, internal links, schema, images, citations).

- Publish: The agent pushes the page to a CMS like WordPress or Webflow, adds metadata, and submits the URL for indexing.

- Monitor: The agent watches indexation, ranking movement, and CTR shifts, then flags anomalies (sudden drops, cannibalization, traffic with no conversions).

- Iterate: The agent updates content, tests new titles, expands sections, or merges overlapping pages based on measured outcomes.
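The middle of this loop (gather, generate, QA, publish) can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product works: `PageState`, `run_loop`, and the four callables are hypothetical names, and real gather and publish steps would call external APIs.

```python
from dataclasses import dataclass, field


@dataclass
class PageState:
    url: str
    draft: str = ""
    signals: dict = field(default_factory=dict)
    published: bool = False
    log: list = field(default_factory=list)


def run_loop(state, gather, generate, qa, publish):
    """Run one pass: gather -> generate -> QA -> publish, or stop for review."""
    state.signals = gather(state.url)
    state.log.append("gather")

    state.draft = generate(state.signals)
    state.log.append("generate")

    problems = qa(state.draft)  # a list of problem strings, empty when clean
    state.log.append("qa")
    if problems:
        # Any QA problem blocks publishing and is recorded for a human.
        state.log.append("review: " + "; ".join(problems))
        return state

    publish(state.url, state.draft)
    state.published = True
    state.log.append("publish")
    return state
```

Passing the steps in as functions keeps the loop itself small and makes each stage replaceable. Monitoring and iteration would call `run_loop` again when performance data changes.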

What Data An SEO Agent Needs to Work

An SEO agent performs best when it can ground decisions in first party performance data plus live SERP context. Common inputs include:

- Search performance: clicks, impressions, CTR, average position, query to page mapping (Google Search Console).

- Site analytics: bounce rate proxies, time on page, conversion events, assisted conversions (Google Analytics).

- Crawl and indexation: status codes, canonicals, robots rules, sitemap coverage (often via a crawler plus Search Console).

- Content inventory: URLs, topics, last updated date, internal link graph.

- External context: competitor URLs, SERP features, People Also Ask questions, authoritative references such as Google Search guidance.
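As an illustration of the search performance input, the sketch below builds a request body for a query-by-page report and turns the returned rows into a query to page mapping. The field names (`startDate`, `endDate`, `dimensions`, `rowLimit`, and rows with `keys` and `clicks`) follow the Search Console Search Analytics query method as commonly documented, but check them against the current API reference before relying on them. The helper names are hypothetical, and the authenticated call itself is left out.

```python
from collections import defaultdict


def build_query_request(start_date, end_date, row_limit=1000):
    """Request body for a report grouped by query and page."""
    return {
        "startDate": start_date,
        "endDate": end_date,
        "dimensions": ["query", "page"],
        "rowLimit": row_limit,
    }


def query_to_page_map(rows):
    """Group rows by query; list each query's pages, most clicks first."""
    mapping = defaultdict(list)
    for row in rows:
        query, page = row["keys"]  # order matches the requested dimensions
        mapping[query].append((page, row["clicks"]))
    return {
        query: sorted(pages, key=lambda item: item[1], reverse=True)
        for query, pages in mapping.items()
    }


def cannibalized_queries(mapping):
    """Queries answered by more than one URL, a possible cannibalization sign."""
    return sorted(q for q, pages in mapping.items() if len(pages) > 1)
```

A query that maps to several URLs is not always a problem, so a result from `cannibalized_queries` is better treated as a review prompt than as an automatic merge instruction.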

Where Agents Usually Fail Without Guardrails

Agents break when they generate without evidence. Teams reduce that risk by forcing retrieval before writing, requiring citations for factual claims, and running automated checks against brand and policy rules. Tools like Balzac fit this loop by automating research, drafting, QA steps, and publishing, while keeping humans in the approval path when risk rises.

Why Tools Are Being Built for Agents Instead of Humans

Teams adopt SEO agents because manual SEO cannot match the speed of modern SERP change. An agent can research, draft, publish, and measure in one continuous loop, while a human workflow waits on handoffs and calendar time. This shift happens because economics and operations reward automation, not because humans lost strategic value.

Speed Beats Perfect Planning

Search engines and competitors create a fast feedback environment. Pages rise and fall based on fresh content, intent alignment, and internal linking changes that happen constantly. Agent based tools shorten cycle time by turning “insight” into “shipping work” quickly, which matters more than producing the perfect brief.

Scale Changes the Unit Economics of Content

Once a site targets hundreds of long tail queries, content becomes a production system problem. Humans can still do high value pages, but they struggle with coverage and refresh volume. Agent first tools reduce marginal cost per page by automating repeatable tasks such as:

- Keyword expansion and clustering

- SERP pattern detection (formats, intent, headings)

- On page SEO rules (titles, schema suggestions, internal links)

- Updating decaying pages based on performance data

Feedback Loops Require Continuous Monitoring

Traditional SEO stacks focus on reporting to humans. Agents need data they can act on without waiting for interpretation. That usually includes Google Search Console for queries and CTR, analytics events for conversions, and rank tracking. With machine readable signals, an agent can spot drops, create an update, republish, then watch outcomes.

Coordination Costs Are the Hidden Tax

Human SEO work often fails because of operational drag, not lack of skill. Each extra handoff adds delays and errors: briefs get outdated, writers miss constraints, editors change intent, publishers forget internal links. Agent workflows remove many of these steps by centralizing rules and execution in software.

Why Vendors Shift to “Agent First” Design

Tools built for agents prioritize APIs, automation hooks, and measurable outputs, because agents cannot click dashboards all day. That is why many platforms now expose programmatic access (for example, Google Search documentation and APIs) and why autonomous tools such as Balzac focus on end to end publishing instead of isolated suggestions.

The New “Agent-First” SEO Stack (APIs, Guardrails, and Evaluators)

To run the loop described in the last section at scale, vendors now build for software operators, not for dashboards. An agent first SEO stack exposes actions through APIs, enforces governance before publish, and proves quality with evaluators and logs.

Programmatic Interfaces: The Agent Needs Buttons It Can Press

An agent cannot work end to end if it cannot read and write to the systems that hold SEO truth. That means vendors need reliable APIs or native connectors for:

- Performance data: Google Search Console, Google Analytics.

- Site state: CMS (WordPress, Webflow, Shopify), sitemaps, robots.txt, canonical tags.

- Indexing workflows: sitemap submission and URL inspection or indexing requests where allowed (Google has retired its sitemap ping endpoint, so agents should not depend on it).

- Content operations: drafts, revisions, approvals, internal links, schema fields, image alt text.

If a tool only exports a CSV or shows a chart, an agent cannot close the loop without a human.

Guardrails And Governance: Define What The Agent Must Never Do

Autonomy works only with hard constraints. In SEO, governance needs to sit before writing and before publishing:

- Topic and claim rules: banned topics, medical or financial thresholds (YMYL), no unverifiable promises.

- Source policy: retrieval required for facts, citations required for numbers and definitions.

- Brand rules: tone, terminology, competitor naming, legal disclaimers.

- Change control: limits on updating live pages, approval required for high traffic URLs.

Agents like Balzac become useful when teams can set these constraints once, then let the system enforce them repeatedly.
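A set-once, enforce-every-time constraint can be as simple as a frozen configuration object plus one check function. The sketch below is an assumption about how a team might encode three of the rules above. `Guardrails` and `check_guardrails` are hypothetical names, and plain substring matching is a deliberately crude stand-in for real topic classification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    banned_topics: frozenset
    required_disclaimer: str
    approval_traffic_threshold: int  # monthly clicks at which a human must approve


def check_guardrails(text, monthly_clicks, rules):
    """Return a list of violations; an empty list means the page may proceed."""
    violations = []
    lowered = text.lower()

    # Topic rules: block any banned topic mentioned in the text.
    for topic in sorted(rules.banned_topics):
        if topic.lower() in lowered:
            violations.append(f"banned topic: {topic}")

    # Brand rules: the legal disclaimer must appear verbatim (case-insensitive).
    if rules.required_disclaimer.lower() not in lowered:
        violations.append("missing disclaimer")

    # Change control: high-traffic URLs always need human approval.
    if monthly_clicks >= rules.approval_traffic_threshold:
        violations.append("approval required: high traffic URL")

    return violations
```

Freezing the dataclass means a pipeline stage cannot quietly loosen a rule at runtime; changing a constraint requires creating a new configuration.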

Evaluators: Automated QA That Scores Output Before Google Does

An evaluator is a check that answers one question with consistent tests: is this page safe and good enough to publish? Common evaluator types include:

- Factuality checks: does each factual claim map to a retrieved source?

- Search intent fit: does the outline match the current SERP patterns and questions?

- Duplication and cannibalization: does this overlap existing site pages or target the same query set?

- On page requirements: does the page meet the rules for title length, H1 use, internal links, and schema presence?

Google’s guidance on creating helpful content sets the direction for what these evaluators should reward; see Google Search Central.
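The on page evaluator is the easiest one to sketch. The example below checks title length, H1 count, internal links, and schema presence on a rendered HTML string. The thresholds (60 characters, two internal links) and the `site_host` default are illustrative assumptions, not recommendations from Google. The regular expressions are a rough shortcut; a production check would use a real HTML parser.

```python
import re


def on_page_checks(html, max_title_len=60, min_internal_links=2,
                   site_host="example.com"):
    """Return a dict of check name -> bool for one rendered page."""
    results = {}

    # Title must exist, be non-empty, and fit the length limit.
    title = re.search(r"<title>(.*?)</title>", html, re.S | re.I)
    results["title_length"] = (
        title is not None and 0 < len(title.group(1).strip()) <= max_title_len
    )

    # Exactly one H1 on the page.
    results["single_h1"] = len(re.findall(r"<h1[\s>]", html, re.I)) == 1

    # Internal links: relative hrefs or hrefs that contain the site host.
    hrefs = re.findall(r'href="([^"]+)"', html, re.I)
    internal = [h for h in hrefs if h.startswith("/") or site_host in h]
    results["internal_links"] = len(internal) >= min_internal_links

    # Schema presence: any JSON-LD script block counts.
    results["schema_present"] = "application/ld+json" in html.lower()

    return results
```

Returning one boolean per check, rather than a single pass or fail, lets the agent report exactly which requirement blocked a page.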

Observability: Logs, Versioning, And Post Publish Attribution

Teams need to debug agent decisions like they debug software. An observable stack keeps:

- Version history of prompts, sources used, and page edits.

- Decision logs: why the agent chose a query, a title, or an internal link.

- Outcome tracking: indexation status, ranking deltas, CTR changes tied to specific revisions.

Without this, failures look like “AI wrote something weird” instead of a fixable chain of inputs and rules.
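One lightweight way to get there is an append-only log of structured decision records. The sketch below assumes a JSON Lines style log held in a list; `DecisionRecord`, `append_record`, and `history_for` are hypothetical names, and a real system would write to durable storage.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    url: str
    action: str      # e.g. "publish", "retitle", "add_internal_link"
    reason: str      # why the agent chose this action
    sources: list    # identifiers of the evidence it used
    revision: int    # page revision this decision produced
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_record(log_lines, record):
    """Serialize one record as a JSON line and append it to the log."""
    log_lines.append(json.dumps(asdict(record), sort_keys=True))
    return log_lines


def history_for(log_lines, url):
    """All decisions for one URL, ordered by revision number."""
    records = [json.loads(line) for line in log_lines]
    return sorted(
        (r for r in records if r["url"] == url),
        key=lambda r: r["revision"],
    )
```

With revision numbers in every record, a ranking change observed in Search Console can be lined up against the specific edit and sources that preceded it.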

Balzac as an Autonomous SEO Agent: What It Automates End-to-End

Agent first stacks only matter if an agent can ship real pages, not drafts in a doc. Balzac fits the agent workflow by running content from topic selection through publishing, while keeping humans focused on constraints and approvals instead of repetitive production steps.

1) Topic Discovery and Prioritization

Balzac starts with a concrete outcome, such as new organic clicks for a product category, then it selects topics that match that goal. It uses signals that an agent can act on, including existing site coverage, competitor patterns, and query intent signals, so it does not rely on guesswork.

2) SERP Patterning and Brief Creation

Before it writes, Balzac builds a page plan based on what ranks now. This matters because Google rewards pages that satisfy intent and format expectations. The agent treats the SERP as the spec, then translates that into a brief: headings, entities to mention, related questions to answer, and likely content length range.

3) Drafting With On Page SEO Built In

Balzac generates a full article draft, then applies on page requirements as part of the writing pass. In practice, this means it produces:

- Title and meta description aligned to intent and CTR patterns.

- Clear heading hierarchy that matches how users scan.

- Internal link candidates based on topical relevance and site structure.

4) Quality Controls Before Publishing

Autonomy only works with verification. Balzac runs checks that reduce common agent failure modes, including duplication risk, missing sections, weak topical coverage, and brand constraints set by the user. If the agent cannot meet constraints, it should route the page for review instead of publishing blindly.

For teams that operate in regulated categories, this aligns with how Google frames quality and helpfulness in its documentation (see Creating Helpful, Reliable, People-First Content).

5) CMS Publishing and Indexing Workflow

Balzac publishes directly to major CMS platforms. This is the execution layer that most SEO tools stop short of. The agent pushes the content with metadata, then supports indexation workflows through sitemap and URL submission processes available in systems like Google Search Console.

6) Monitoring and Refresh Triggers

After publishing, Balzac tracks performance signals such as indexation status, impressions, CTR, and ranking movement, then it uses those signals to trigger updates. This closes the loop described earlier: publish, measure, iterate. It matches how modern SEO works, because content needs frequent refreshes as queries shift and competitors update pages.

Key Risks: Hallucinations, Brand Safety, Search Policy, and Content Quality

Autonomous SEO only works if teams treat it like a production system with failure modes. The big risks cluster into four areas: wrong facts, unsafe messaging, policy violations, and low value pages. The control pattern stays the same: force evidence before writing, block unsafe outputs, and log every decision so you can trace mistakes.

Hallucinations And Incorrect Claims

Hallucinations happen when a model fills gaps instead of using sources. In SEO content, one wrong definition, statistic, or product detail can create customer support tickets and trust loss.

- Retrieval before generation: require the agent to pull sources first, then write only from those notes.

- Claim level citations: attach a source to each factual claim, not just a reference list.

- Confidence gates: route pages for human review when sources conflict or coverage looks thin.

Brand Safety And Legal Exposure

Brand safety issues show up as tone drift, competitor callouts, risky promises, or statements that legal teams would never approve. This matters more on high intent pages where users act on advice.

- Non negotiable language rules: banned phrases, required disclaimers, approved terminology, region rules.

- Topic fencing: block or require review for YMYL areas such as medical and financial advice.

- Template constraints: lock sections like pricing, guarantees, and policy statements to vetted text.

Search Policy And Spam Signals

Google does not ban AI content by default. It targets pages that feel scaled, thin, or made for search engines instead of people. The fastest way to create risk is to publish hundreds of near duplicates with minor keyword swaps.

- Uniqueness checks: detect near duplicates across your own site before publish.

- Intent alignment: compare draft structure to live SERP patterns, then block mismatches.

- Rate limits: cap daily publishes per topic cluster to avoid sudden footprint spikes.

Use Google Search Central as the baseline for “helpful” pages: https://developers.google.com/search/docs/fundamentals/creating-helpful-content.
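A uniqueness check can start with word shingles and Jaccard similarity, a standard near-duplicate technique. The sketch below is one possible implementation, not a description of how Google or any vendor detects duplicates. The shingle size of 3 and the 0.8 threshold are assumptions to tune on your own content.

```python
def shingles(text, size=3):
    """Set of overlapping word n-grams from the lowercased text."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Jaccard similarity of the two texts' shingle sets, from 0.0 to 1.0."""
    sa, sb = shingles(a), shingles(b)
    if not sa and not sb:
        return 1.0  # two empty texts are treated as identical
    return len(sa & sb) / len(sa | sb)


def is_near_duplicate(draft, existing_pages, threshold=0.8):
    """True if the draft is too similar to any existing page text."""
    return any(jaccard(draft, page) >= threshold for page in existing_pages)
```

On a long page, swapping one keyword changes only the few shingles that contain it, so the similarity stays close to 1.0 and the draft is caught. That is exactly the "minor keyword swap" pattern described above.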

Content Quality Fails At Scale

Quality drops when agents produce generic pages that miss the query intent, skip key entities, or hide the answer behind filler. Teams control this with evaluators and hard publish thresholds. Balzac fits here when teams configure rules that block publishing until the page passes checks for intent fit, internal linking, and required sections.

- Minimum information thresholds: required entities, required questions answered, required examples.

- Editorial QA sampling: audit a fixed percentage of outputs weekly, then update rules.

- Versioned change logs: store sources, drafts, and edits so you can roll back fast.

What to Measure: KPIs That Actually Prove an Agent Works

After an agent publishes, the only question that matters is simple: did it create measurable organic growth at an acceptable risk and cost? A lean scorecard keeps teams honest because it ties agent output to Search Console signals, business outcomes, and operational efficiency.

Indexation: Did Google Accept the Output?

Indexation proves the agent shipped pages that Google can crawl, render, and include. Track it in Google Search Console, and treat failures as a pipeline bug, not a content problem.

- Indexation rate: indexed pages divided by published pages (by batch and by template type).

- Time to index: days from publish to first impression in Search Console.

- Coverage errors: spikes in “Crawled - currently not indexed” and canonical issues.
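The first two metrics reduce to simple arithmetic once you have a per-page record. The sketch below assumes each page is a dict with `published`, `first_impression`, and `indexed` keys. That shape is hypothetical, and how you populate it from Search Console exports is up to your pipeline.

```python
from datetime import date
from statistics import median


def indexation_rate(pages):
    """Indexed pages divided by published pages for one batch."""
    if not pages:
        return 0.0
    indexed = sum(1 for p in pages if p["indexed"])
    return indexed / len(pages)


def median_days_to_first_impression(pages):
    """Median days from publish to first impression, ignoring pages with none yet."""
    deltas = [
        (p["first_impression"] - p["published"]).days
        for p in pages
        if p.get("first_impression")
    ]
    return median(deltas) if deltas else None
```

Pages that have not yet received an impression are left out of the median, so it is worth reading this number next to the indexation rate rather than on its own.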

Rankings: Are You Winning the Intended Queries?

Rankings matter most when you measure them against the agent’s intent, not vanity head terms. Use query level data from Search Console, plus a rank tracker only if you need daily SERP change detection.

- Share of page one: percent of target queries with average position 10 or better.

- Query to page alignment: one primary query set per URL, low cannibalization.

CTR: Did the Snippet Earn the Click?

CTR turns impressions into traffic, and it often improves faster than rankings. Track it per query and per URL, and compare against historic baselines in Search Console.

- CTR delta after title or description changes.

- Impressions with flat clicks, a sign of weak snippet or mismatched intent.
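Both signals can be computed from two Search Console snapshots of the same URL or query. In the sketch below, `before` and `after` are dicts with `clicks` and `impressions`. The 20% and 5% growth thresholds are illustrative assumptions, not benchmarks.

```python
def ctr(clicks, impressions):
    """Click-through rate, or 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def ctr_delta(before, after):
    """Change in CTR between two periods (positive means improvement)."""
    return (ctr(after["clicks"], after["impressions"])
            - ctr(before["clicks"], before["impressions"]))


def impressions_up_clicks_flat(before, after,
                               min_impression_growth=0.2,
                               max_click_growth=0.05):
    """Flag pages whose impressions grew while clicks barely moved."""
    if before["impressions"] == 0 or before["clicks"] == 0:
        return False  # no baseline to compare against
    impression_growth = ((after["impressions"] - before["impressions"])
                         / before["impressions"])
    click_growth = (after["clicks"] - before["clicks"]) / before["clicks"]
    return (impression_growth >= min_impression_growth
            and click_growth <= max_click_growth)
```

Compare periods of equal length so that a longer reporting window is not mistaken for impression growth.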

Conversions: Did Traffic Create Business Value?

Conversions prove the agent supports revenue or pipeline. Measure with Google Analytics conversion events and attribution that matches your buying cycle.

- Conversion rate from organic landing pages the agent created or refreshed.

- Conversions per page, not just sessions per page.

Refresh Velocity: Can the Agent Keep Content Current?

Refresh velocity measures how quickly the system reacts to decay. An agent like Balzac earns its keep when it updates pages fast after performance drops.

- Median days to refresh after a trigger (position drop, CTR drop, new competing page).

- Recovery rate: percent of refreshed pages that regain impressions or clicks within 28 days.
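A minimal way to compute both numbers is shown below. The event and page shapes are hypothetical. "Recovered" is defined here as more clicks in the 28 days after the refresh than in the 28 days before it, which is one reasonable reading of the metric rather than a standard definition.

```python
from datetime import date
from statistics import median


def median_days_to_refresh(events):
    """Median days between a trigger and the refresh that answered it.

    Each event is a dict with 'triggered' and 'refreshed' dates;
    'refreshed' is None while the refresh is still pending.
    """
    days = [
        (e["refreshed"] - e["triggered"]).days
        for e in events
        if e["refreshed"] is not None
    ]
    return median(days) if days else None


def recovery_rate(refreshed_pages):
    """Share of refreshed pages with more clicks after the refresh than before."""
    if not refreshed_pages:
        return 0.0
    recovered = sum(
        1 for p in refreshed_pages
        if p["clicks_28d_after"] > p["clicks_28d_before"]
    )
    return recovered / len(refreshed_pages)
```

Pending refreshes are excluded from the median, so a growing backlog will not show up in that number. Tracking the count of open triggers alongside it closes that gap.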

Cost Per Published Page: Is Automation Beating Human Production?

Cost per published page is the clearest unit metric. Include software cost, human review time, and any editorial or compliance work.

- All in cost per indexed page (not per draft).

- Cost per conversion from agent produced pages.
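The arithmetic is simple, but writing it down prevents quiet changes to the denominator. The function and parameter names below are hypothetical, and the figures in the usage note are made up purely to show the calculation.

```python
def total_cost(software_cost, review_hours, hourly_rate, other_costs=0.0):
    """All-in cost for a period: software, human review time, and other work."""
    return software_cost + review_hours * hourly_rate + other_costs


def cost_per_indexed_page(cost, indexed_pages):
    """Cost divided by pages that were indexed, not merely drafted or published."""
    return cost / indexed_pages if indexed_pages > 0 else None


def cost_per_conversion(cost, conversions):
    """Cost divided by conversions attributed to agent-produced pages."""
    return cost / conversions if conversions > 0 else None
```

For example, with 500 in software cost, 10 review hours at 60 per hour, and 100 in compliance work, `total_cost` returns 1200. Spread over 80 indexed pages, that is 15 per indexed page.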

Use the same scorecard for every vendor or internal build, and anchor definitions to the source systems themselves, especially Google Search Console and Google Analytics 4, so the agent cannot “win” by changing how you measure.

Where This Market Is Going in 2026: Trends and Predictions

The controls described earlier will tighten in 2026 because agents will publish more pages with less human review. Teams will demand proof that an agent stays accurate, safe, and useful, not just fast. Expect the market to move toward multi agent pipelines, retrieval first writing, and harder quality scoring before anything goes live.

Multi Agent Pipelines Replace One General Agent

In 2026, most serious deployments will split work across specialized agents because this reduces errors and makes debugging easier. Instead of one model doing everything, teams will run a chain that looks like this:

- Research agent: builds a source pack from approved documents and live SERP snapshots.

- Writer agent: drafts only from the source pack and the brief.

- Editor agent: checks structure, intent fit, and duplication against the site inventory.

- Publisher agent: pushes to WordPress, Webflow, or Shopify and applies metadata and schema fields.

This pattern will push vendors to expose clearer APIs and stronger audit trails, since every handoff becomes machine to machine.
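The chain can be modeled as a sequence of small functions that pass one job object along and record each handoff. This is a toy sketch: the stage bodies are placeholders for model calls and CMS requests, and every name in it is hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    topic: str
    sources: list = field(default_factory=list)
    draft: str = ""
    issues: list = field(default_factory=list)
    published: bool = False
    trail: list = field(default_factory=list)  # audit trail of stages run


def research(job):
    # Placeholder: a real research agent would build a source pack here.
    job.sources = [f"approved-doc-about-{job.topic}"]
    return job


def write(job):
    # The writer refuses to draft without a source pack.
    if not job.sources:
        job.issues.append("no sources")
        return job
    job.draft = f"Draft on {job.topic}, based on {len(job.sources)} source(s)."
    return job


def edit(job):
    # Placeholder structural check: flag drafts under five words.
    if len(job.draft.split()) < 5:
        job.issues.append("draft too short")
    return job


def publish(job):
    # Publish only when no earlier stage raised an issue.
    job.published = not job.issues
    return job


def run_pipeline(job, stages=(research, write, edit, publish)):
    for stage in stages:
        job = stage(job)
        job.trail.append(stage.__name__)
    return job
```

Because each stage has the same signature, any one of them can be tested alone or replaced without touching the others, which is the debugging benefit the split is meant to deliver.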

Retrieval First Content Becomes the Default

Teams will treat factual grounding as a baseline requirement. Retrieval first content means the system collects evidence before it writes, and it keeps a record of what it used. Expect more platforms to integrate retrieval workflows such as:

- First party data from Google Search Console and Google Analytics

- Approved knowledge bases (help centers, docs, policy pages)

- Selective web sources, with citation requirements

This lines up with Google’s emphasis on helpful and reliable content, see Google Search Central guidance.

Quality Evaluation Moves From Spot Checks to Scored Gates

In 2026, teams will stop relying on occasional editorial audits. They will use publish gates that score each draft and block risky pages automatically. Common gates will include claim citation coverage, intent match to the current SERP, duplication risk, and brand policy compliance.

Balzac and similar tools will win when they make these gates configurable, then log every decision so teams can trace a ranking drop to a specific revision and its inputs.
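A scored gate is a comparison of per-check scores against configurable minimums. The sketch below assumes every score is normalized to the 0 to 1 range with higher meaning better, so duplication risk is expressed as a `uniqueness` score. The threshold values and all names are illustrative assumptions.

```python
DEFAULT_THRESHOLDS = {
    "citation_coverage": 0.95,
    "intent_match": 0.70,
    "uniqueness": 0.80,
    "brand_compliance": 1.00,
}


def citation_coverage(claims):
    """Share of factual claims that carry a source."""
    if not claims:
        return 1.0
    return sum(1 for c in claims if c.get("source")) / len(claims)


def gate_decision(scores, thresholds=DEFAULT_THRESHOLDS):
    """Return ('publish', []) or ('review', [names of failed gates]).

    A missing score counts as 0.0, so an evaluator that did not run
    blocks publishing instead of being silently skipped.
    """
    failed = [
        name for name, minimum in thresholds.items()
        if scores.get(name, 0.0) < minimum
    ]
    return ("review", sorted(failed)) if failed else ("publish", [])
```

Returning the failed gate names alongside the decision gives the decision log something concrete to store, which supports the traceability described above.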

Optimization Shifts Toward Refresh Velocity and Page Operations

As agents increase publishing volume, winners will focus on keeping pages current. More stacks will prioritize automated refresh triggers such as falling CTR, slipping average position, or new query variants. This turns SEO into page operations, a system that continuously updates content instead of treating publishing as the finish line.
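Trigger detection can be sketched as a comparison of two snapshots of the same page. Each snapshot below is a dict with `clicks`, `impressions`, `position`, and `queries`. That shape, the 20% relative CTR drop, and the two-position slip are all assumptions for illustration.

```python
def refresh_triggers(previous, current, ctr_drop=0.2, position_slip=2.0):
    """Return the list of refresh triggers that fired between two snapshots."""
    triggers = []

    prev_ctr = (previous["clicks"] / previous["impressions"]
                if previous["impressions"] else 0.0)
    cur_ctr = (current["clicks"] / current["impressions"]
               if current["impressions"] else 0.0)

    # Falling CTR: relative drop compared with the previous period.
    if prev_ctr > 0 and (prev_ctr - cur_ctr) / prev_ctr >= ctr_drop:
        triggers.append("ctr_drop")

    # Slipping average position: a larger number means a lower ranking.
    if current["position"] - previous["position"] >= position_slip:
        triggers.append("position_slip")

    # New query variants the page now appears for.
    if set(current["queries"]) - set(previous["queries"]):
        triggers.append("new_queries")

    return triggers
```

An empty list means no refresh is needed. Several triggers firing at once is a reasonable signal to prioritize that page in the refresh queue.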

Conclusion: How to Choose (or Build) an SEO AI Agent Without Regret

The KPI scorecard described earlier tells you whether an agent works. This section tells you how to pick the right agent, or decide to build one, without locking yourself into a workflow you cannot govern.

Decision Criteria That Matter in Real Operations

Choose an SEO AI agent based on whether it can run the full loop safely: research, publish, and learn from outcomes. Demos that focus on writing quality alone hide the real failure points, which usually happen in data access, QA, and change control.

- Data grounding: Can it pull from Google Search Console and your content inventory, then cite sources for factual claims?

- Execution: Can it publish to your CMS (WordPress, Webflow, Shopify) with metadata, internal links, and schema fields?

- Guardrails: Can you enforce banned topics, required disclaimers, and approval gates for high traffic URLs?

- Evaluators: Can it score intent fit, duplication risk, and on page requirements before it ships?

- Observability: Can you see what sources it used, what changed, and why it made the decision?

- Unit economics: Does cost per indexed page beat your current human process, after review time?

Build Versus Buy: A Practical Rule

Buy when you want speed to production and a maintained workflow. Build when you need deep integration, strict compliance controls, or a custom evaluator layer that matches your internal policy. Many teams land on a hybrid: they buy an agent that can publish, then they add their own checks.

A Responsible Pilot Plan

Run a pilot that tests execution and safety, not just writing quality. Keep it small enough to audit, and structured enough to learn.

- Pick a low risk cluster: avoid YMYL topics, start with product education or glossary style intent.

- Set hard publish rules: define banned claims, citation requirements, and rate limits.

- Connect measurement: lock KPI definitions to Search Console and GA4 so results stay comparable.

- Audit a fixed sample: review a percentage of pages weekly, then tighten rules and templates.

What “Without Regret” Looks Like

You avoid regret when you treat the agent like software in production: controlled inputs, scored outputs, and logged decisions. Tools like Balzac fit that model when you need an agent that can generate, QA, and publish with minimal human intervention, while still routing risky pages to review. For policy and quality baselines, align your checks with Google’s guidance on helpful content: Google Search Central.